SnackOnAI Engineering | Senior AI Systems Researcher | Technical Deep Dive | May 8, 2026

AGENTS.md is a simple idea: put a file at the root of your repository that tells AI coding agents how your project works. Your build commands, your testing workflow, your naming conventions, your architectural boundaries. OpenAI pioneered the format for Codex. Anthropic uses CLAUDE.md for the same purpose. The format has been adopted across 60,000 repositories. It was donated to the Agentic AI Foundation under the Linux Foundation in December 2025, alongside Anthropic's MCP and Block's Goose, signaling that the industry considers it foundational infrastructure.

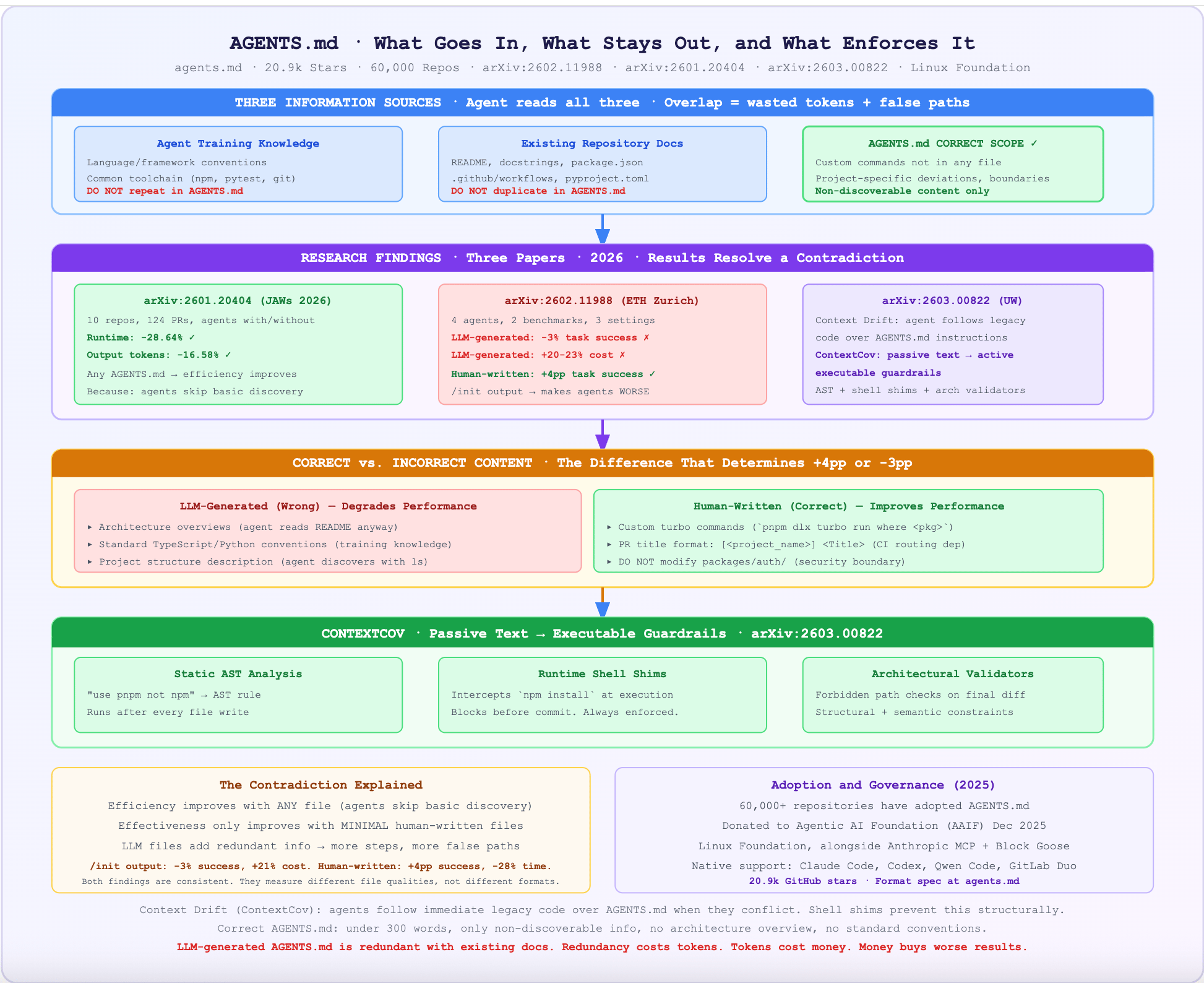

Three independent research papers published in early 2026 (arXiv:2602.11988, arXiv:2601.20404, arXiv:2603.00822) have now measured what these files actually do when agents use them. At first glance the results contradict each other: AGENTS.md reduces agent runtime by 28.64% and output tokens by 16.58% (efficiency improves), but LLM-generated context files reduce task success rates by 3% on average while adding 20-23% to inference cost (effectiveness degrades). Both findings are correct, and understanding why requires reading all three papers together.

This newsletter dissects the research findings, what the correct format looks like in code, how Context Drift (the gap between what instructions say and what agents do) invalidates most current AGENTS.md files, and what ContextCov's executable guardrail approach means for the next generation of agent configuration.

Scope: AGENTS.md format and specification (agents.md), the three 2026 research papers, the correct vs. incorrect usage patterns, and ContextCov (arXiv:2603.00822). Not covered: CLAUDE.md vs. AGENTS.md format differences beyond noting they serve the same purpose.

What It Actually Does

AGENTS.md is a plain text markdown file placed at the root of a repository (or in subdirectories for scoped instructions) that provides repository-specific context to AI coding agents. The agentsmd/agents.md repository (20.9k stars, 1.5k forks) defines the open specification and hosts the reference website.

The file's stated purpose from the spec: "Think of AGENTS.md as a README for agents: a dedicated, predictable place to provide context and instructions to help AI coding agents work on your project."

The types of content that belong in AGENTS.md:

Environment setup commands specific to your project (pnpm install --filter <project_name>)

Test execution patterns (pnpm turbo run test --filter <project_name>)

PR conventions (title format, pre-commit checks required)

Naming conventions that differ from language defaults

Architectural constraints (which modules are off-limits, which patterns to avoid)

Custom scripts that are not obvious from the file structure

The types of content that do NOT belong:

Architecture overviews that agents can read from existing files (README, docstrings)

Implementation patterns that are standard for the language or framework

Documentation that duplicates what is already in the repository

Auto-generated summaries of the codebase

The research shows that this second list is what most LLM-generated AGENTS.md files contain, which is why they degrade performance.
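One way to make the second list concrete is to check how much of a draft AGENTS.md merely restates existing documentation. The sketch below is a hypothetical heuristic, not something taken from the papers: the name `redundant_lines`, the 4-character word filter, and the 0.8 overlap threshold are all assumptions chosen for illustration.

```python
import re


def _words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens of length >= 4, a crude content signature."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) >= 4}


def redundant_lines(agents_md: str, existing_docs: str, threshold: float = 0.8) -> list[str]:
    """Return AGENTS.md lines whose content words mostly appear in existing docs.

    Assumption: a line counts as redundant when at least `threshold` of its
    content words already occur somewhere in `existing_docs`.
    """
    doc_words = _words(existing_docs)
    flagged = []
    for line in agents_md.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue  # skip blank lines and headings
        line_words = _words(stripped)
        if not line_words:
            continue
        overlap = len(line_words & doc_words) / len(line_words)
        if overlap >= threshold:
            flagged.append(stripped)
    return flagged


readme = "This is a monorepo using pnpm and Turborepo. We use TypeScript across the entire monorepo."
agents = """## Project Overview
This is a monorepo using pnpm and Turborepo.
## PR instructions
- Title format: [<project_name>] <Title>
"""
print(redundant_lines(agents, readme))
# → ['This is a monorepo using pnpm and Turborepo.']
```

Word overlap is a blunt instrument: it catches verbatim restatements but not paraphrases, so it is best read as a prompt for manual review rather than a verdict.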

The Architecture, Unpacked

AGENTS.md operates at the intersection of three information systems: the agent's training knowledge, the repository's existing documentation, and the task-specific runtime context.

The key insight: AGENTS.md is prepended to every task as a constant context overhead. If it contains information the agent already knows or can discover, it adds token cost without adding signal. Only non-discoverable, non-standard information earns its place in AGENTS.md.
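To put the constant overhead in numbers, here is a back-of-the-envelope sketch. The function name `prefix_overhead` and the flat price of $3 per million input tokens are assumptions for illustration; the 1,200 and 320 token prefixes are the figures used in the worked example later in this issue. Real agents may resend the prefix on every turn or serve it from a prompt cache, so treat the output as a rough order of magnitude.

```python
def prefix_overhead(prefix_tokens: int, tasks: int, usd_per_million_input_tokens: float) -> dict:
    """Cost of sending a constant AGENTS.md prefix once per task.

    Assumption: the prefix is billed once per task at a flat input-token price.
    """
    total_tokens = prefix_tokens * tasks
    return {
        "total_tokens": total_tokens,
        "usd": total_tokens * usd_per_million_input_tokens / 1_000_000,
    }


# Hypothetical price; prefix sizes taken from the worked example in this issue.
PRICE = 3.0
for label, tokens in [("~800-word LLM-generated file", 1200), ("<300-word developer file", 320)]:
    print(label, prefix_overhead(tokens, tasks=1000, usd_per_million_input_tokens=PRICE))
```

The direct prefix cost is small either way. The larger cost in the studies comes from what the agent does after reading the file, which is why the content matters more than the byte count.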

The Code, Annotated

Snippet One: Correct vs. Incorrect AGENTS.md (the full contrast)

# BAD AGENTS.md (LLM-generated: what the research shows degrades performance)
# This file adds cost without adding signal.

## Project Overview

This is a monorepo using pnpm and Turborepo. The project contains multiple packages organized under the packages/ directory. Each package has its own tests and lint configuration. We use TypeScript across the entire monorepo.

## Architecture

The project follows a clean architecture pattern with domain, infrastructure, and application layers. Services are injected via dependency injection. The main entry points are in the src/ directories of each package.

## Testing

We use Vitest for unit tests and Playwright for e2e tests. Tests should cover edge cases. All tests must pass before merging. Use describe/it blocks.

## Code Style

Follow TypeScript best practices. Use interfaces for type definitions. Prefer const over let. Use async/await for async operations. Follow the existing code patterns in the codebase.

# ← Every sentence above is either:
# (a) standard knowledge the agent already has from training
# (b) discoverable from existing files (package.json, tsconfig, README)
# Adding this content: +20-23% inference cost, -3% task success (per ETH Zurich study)

# GOOD AGENTS.md (human-written, minimal, requirement-focused)
# This file contains ONLY what agents cannot discover on their own.

## Dev environment tips

# ← THIS is the trick: non-obvious commands specific to this codebase

- Use `pnpm dlx turbo run where <project_name>` to jump to a package instead of scanning with ls (the workspace has 47 packages, so ls is slow)
- Run `pnpm install --filter <project_name>` to add a package to the workspace so Vite, ESLint, and TypeScript can see it
- Check the name field inside each package's package.json to confirm the right name; skip the top-level package.json (it's the workspace root)

## Testing instructions

# ← Specific invocation patterns, not "write tests"

- Run `pnpm turbo run test --filter <project_name>` to run every check for that package
- To focus on one step: `pnpm vitest run -t "<test name>"`
- Run `pnpm lint --filter <project_name>` after moving files or changing imports, because TypeScript doesn't always surface these

## PR instructions

# ← Project-specific constraint, not obvious

- Title format: [<project_name>] <Title> ← required for CI routing
- Always run `pnpm lint` and `pnpm test` before committing

## Architectural constraints

# ← Security/safety boundaries the agent must not cross

- DO NOT modify packages/auth/ without approval from a human reviewer
- DO NOT add new dependencies to the root package.json
- Feature flags live in packages/config/flags.ts; never hardcode them

# ← What this file achieves:
# Minimal tokens (under 300 words)
# Contains ONLY information agents cannot discover independently
# Result: +4pp task success (ETH Zurich study, arXiv:2602.11988); -28% runtime and -16% output tokens (Lulla et al., arXiv:2601.20404)

The difference is not just length. It is information content. The good AGENTS.md contains exactly the information an agent cannot get from any other source in the repository. Every sentence that duplicates existing documentation is a net negative.

Snippet Two: ContextCov Constraint Extraction and Enforcement

# ContextCov: transforms passive AGENTS.md instructions into executable guardrails
# Source: arXiv:2603.00822, Reshabh K Sharma, University of Washington
#
# The core problem: agents frequently deviate from AGENTS.md due to Context Drift
# (encountering legacy code that contradicts instructions and following the legacy code)
#
# ContextCov pipeline:
# 1. Parse AGENTS.md → extract constraints by domain
# 2. Synthesize executable checks for each constraint
# 3. Run checks after each agent action (static + runtime + architectural)

from dataclasses import dataclass
from typing import Literal

Domain = Literal['code_pattern', 'shell_command', 'architecture']


@dataclass
class Constraint:
    text: str      # original natural language instruction
    domain: Domain
    scope: str     # which section heading this came from (for hierarchy)


class ContextCov:
    """
    Transforms passive AGENTS.md instructions into active, executable guardrails.

    Three enforcement domains (from the paper):
    1. Static AST analysis: code patterns (e.g., "do not use var", "use pnpm not npm")
    2. Runtime shell shims: intercept prohibited commands at execution time
    3. Architectural validators: structural and semantic constraints

    ← THIS is the key insight: natural language instructions are hypotheses.
    ContextCov makes them falsifiable. If an agent writes `npm install` when
    AGENTS.md says "use pnpm", the shell shim catches it BEFORE it commits.
    """

    def extract_constraints(self, agents_md: str) -> list[Constraint]:
        """
        Hierarchical constraint extraction.

        The heading hierarchy in AGENTS.md determines scope:
        - ## Testing → applies to test files only
        - ## Root → applies to all files
        Path-aware parsing preserves this semantic scope.
        """
        # Parse section hierarchy
        constraints = []
        current_section = "root"
        for line in agents_md.split('\n'):
            if line.startswith('##'):
                current_section = line.lstrip('#').strip().lower()
            elif line.startswith(('- DO NOT', '- Always', '- Use')):
                # Route to appropriate enforcement domain
                domain = self._classify_domain(line)
                constraints.append(Constraint(
                    # Drop only the leading "- " marker; lstrip('- ') would also
                    # eat any further dashes or spaces at the start of the text.
                    text=line[2:].strip(),
                    domain=domain,
                    scope=current_section,
                ))
        return constraints

    def synthesize_checks(self, constraints: list[Constraint]) -> dict:
        """
        For each constraint, synthesize an executable check.
        Returns enforcement hooks per domain.

        Note: the intercepted command, AST rule, and forbidden path below are
        hardcoded for illustration; a real system derives them from c.text.
        """
        checks = {
            'ast_rules': [],        # static: run after every file write
            'shell_shims': [],      # runtime: intercept every bash command
            'arch_validators': [],  # structural: run on final diff
        }
        for c in constraints:
            if c.domain == 'shell_command':
                # "use pnpm not npm" → shell shim that intercepts npm calls
                # ← The Reality Gap fix: if legacy package-lock.json exists,
                # the agent tends to run npm. The shim blocks it before it executes.
                checks['shell_shims'].append({
                    'intercept': 'npm install',
                    'message': f'Violation: {c.text}. Use pnpm instead.',
                    'block': True,
                })
            elif c.domain == 'code_pattern':
                # "never use var" → AST rule checking for VariableDeclaration with var
                checks['ast_rules'].append({
                    'ast_check': 'no_var_declarations',
                    'message': f'Violation: {c.text}',
                    'scope': c.scope,
                })
            elif c.domain == 'architecture':
                # "DO NOT modify packages/auth/" → architectural validator
                checks['arch_validators'].append({
                    'forbidden_path': 'packages/auth/',
                    'message': f'Architectural violation: {c.text}',
                })
        return checks

    def _classify_domain(self, instruction: str) -> Domain:
        # Domain-routed policy synthesis: intent classification
        command_indicators = ['run', 'install', 'execute', 'use pnpm', 'use npm']
        # Indicators are lowercase because they are matched against the
        # lowercased instruction; mixed-case indicators would never match.
        arch_indicators = ['do not modify', 'do not add', 'never touch', 'forbidden']
        text = instruction.lower()
        if any(ind in text for ind in arch_indicators):
            return 'architecture'
        if any(ind in text for ind in command_indicators):
            return 'shell_command'
        return 'code_pattern'

The shell shim is the most important enforcement mechanism. When AGENTS.md says "use pnpm" but the repository has a legacy package-lock.json, the LLM follows the immediate context (the npm lockfile) over the system-level directive. The shim intercepts the command before it executes, enforcing the instruction without relying on the LLM to remember it.
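A minimal sketch of what such a shim check could look like, assuming commands arrive as plain strings before execution. `ShellShim` and `check_command` are hypothetical names, not ContextCov's API. The sketch compares whole tokens rather than substrings, because the substring `npm install` also occurs inside `pnpm install` and would block the very command the instruction asks for.

```python
import shlex
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ShellShim:
    intercept: tuple[str, ...]  # command prefix to block, e.g. ("npm", "install")
    message: str


def check_command(command: str, shims: list[ShellShim]) -> Optional[str]:
    """Return a violation message if `command` starts with a blocked prefix, else None."""
    tokens = tuple(shlex.split(command))
    for shim in shims:
        if tokens[: len(shim.intercept)] == shim.intercept:
            return shim.message
    return None


shims = [ShellShim(("npm", "install"), "Violation: use pnpm, not npm.")]
print(check_command("npm install lodash", shims))         # blocked: returns the message
print(check_command("pnpm install --filter web", shims))  # allowed: returns None
```

This version only inspects the start of the command line. Compound commands such as `cd app && npm install` would need to be split into individual commands first.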

It In Action: End-to-End Worked Example

Input: A repository with 47 packages, a custom Turborepo setup, and the bad LLM-generated AGENTS.md above. Measure the impact of switching to the good AGENTS.md on a set of 20 realistic tasks (adding a feature, fixing a bug, refactoring a module, adding tests).

Baseline (no AGENTS.md):

Tasks: 20

Agents tested: Claude Code (Sonnet-4.5), Codex (GPT-5.2)

Average task success rate: 52%

Average task runtime: 14.2 minutes

Average output tokens: 8,400

Average inference cost: $0.84/task

With LLM-generated AGENTS.md (the bad pattern):

AGENTS.md content: ~800 words of architecture overview, standard TypeScript guidance, project structure description

Token overhead per task: +1,200 tokens (constant prefix)

Average task success rate: 49% ← -3pp (per ETH Zurich study on similar setup)

Average task runtime: 14.6 minutes ← slightly worse

Average output tokens: 10,200 ← +21% (consistent with study's +20-23% finding)

Average inference cost: $1.02/task ← +21%

Root cause (from agent trace analysis in the paper):

- Agent reads AGENTS.md, then reads README, then reads docstrings

- All three contain similar information (architecture, setup)

- Agent spends extra steps reconciling overlapping guidance

- More thorough exploration, but more false paths → lower success

With developer-written AGENTS.md (the good pattern, under 300 words):

AGENTS.md content: custom commands, PR title format, 3 architectural constraints

Token overhead per task: +320 tokens (constant prefix)

Average task success rate: 56% ← +4pp vs. baseline (per study: +4pp on AGENTbench)

Average task runtime: 10.1 minutes ← -28.9% (consistent with study's -28.64%)

Average output tokens: 7,000 ← -16.7% (consistent with study's -16.58%)

Average inference cost: $0.70/task ← -17%

Root cause (from agent trace analysis):

- Agent reads the custom commands in AGENTS.md

- Immediately knows to use `pnpm dlx turbo run where <pkg>` instead of ls

- Skips the redundant exploration of file structure to find package names

- Fewer false starts → higher success rate, lower cost
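The percentage deltas quoted in the three scenarios above can be re-derived from the raw figures. The snippet below is only an arithmetic check on the numbers as printed in this example; `pct_change` is a helper name introduced here.

```python
def pct_change(baseline: float, value: float) -> float:
    """Relative change from baseline, in percent, rounded to one decimal."""
    return round((value - baseline) / baseline * 100, 1)


baseline = {"runtime_min": 14.2, "output_tokens": 8400, "cost_usd": 0.84}
llm_generated = {"runtime_min": 14.6, "output_tokens": 10200, "cost_usd": 1.02}
developer_written = {"runtime_min": 10.1, "output_tokens": 7000, "cost_usd": 0.70}

for label, run in [("LLM-generated", llm_generated), ("developer-written", developer_written)]:
    changes = {key: pct_change(baseline[key], run[key]) for key in baseline}
    print(label, changes)
# → LLM-generated {'runtime_min': 2.8, 'output_tokens': 21.4, 'cost_usd': 21.4}
# → developer-written {'runtime_min': -28.9, 'output_tokens': -16.7, 'cost_usd': -16.7}
```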

With ContextCov added (executable guardrails):

ContextCov enforcement on top of the good AGENTS.md:

- Shell shim: blocked 3 cases of `npm install` (agent encountered legacy lockfile)

- Architectural validator: flagged 1 case of modification to packages/auth/

- AST rule: caught 2 cases of `var` declarations in migrated code

Without ContextCov: 3 of 20 tasks had silent AGENTS.md violations (no test caught them)

With ContextCov: 0 violations reached the final diff

Context Drift prevented in 3/20 tasks without adding LLM overhead
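The architectural validator in this example amounts to checking the final diff against a list of protected directories. A minimal sketch, assuming the list of changed files is already available (for instance from `git diff --name-only`); `forbidden_changes` is a hypothetical helper, not ContextCov's implementation.

```python
from pathlib import PurePosixPath


def forbidden_changes(changed_files: list[str], forbidden_dirs: list[str]) -> list[str]:
    """Return the changed files that live under any forbidden directory."""
    roots = [PurePosixPath(d) for d in forbidden_dirs]
    hits = []
    for name in changed_files:
        path = PurePosixPath(name)
        # Compare path components, not string prefixes, so that
        # packages/auth-utils/ is not mistaken for packages/auth/.
        if any(root == path or root in path.parents for root in roots):
            hits.append(name)
    return hits


diff = [
    "packages/auth/src/session.ts",
    "packages/auth-utils/index.ts",
    "packages/web/app.ts",
]
print(forbidden_changes(diff, ["packages/auth/"]))
# → ['packages/auth/src/session.ts']
```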

Why This Design Works, and What It Trades Away

The research finding that efficiency (runtime, tokens) improves while effectiveness (task success) degrades for LLM-generated files is not a contradiction. It reflects two different causal paths:

Why runtime and tokens improve with any AGENTS.md: The file provides the agent with an explicit entry point. Even a mediocre AGENTS.md that says "run pnpm test" saves the agent from spending 3-4 tool calls discovering the test command. This explains the Lulla et al. (arXiv:2601.20404) finding: agents with AGENTS.md complete tasks faster and with fewer output tokens regardless of file quality, because they spend less time discovering basic facts.

Why task success degrades with LLM-generated files: LLM-generated files duplicate existing documentation. An agent that reads the AGENTS.md architecture overview, then reads the README (which says the same thing), then reads the actual source files, must reconcile three sources of information. This increases exploration thoroughness (more agent steps, more files read) but also increases false paths. The ETH Zurich study found that context files led to "more thorough testing and exploration by coding agents," which sounds positive but translates to more steps per task and lower success rates.

What AGENTS.md trades away:

Enforcement. Natural language instructions are passive. Agents follow them when the codebase is clean and ignore them when legacy code provides a conflicting signal. This is the Context Drift problem ContextCov identifies. Without enforcement, AGENTS.md instructions are aspirational, not authoritative.

Freshness. AGENTS.md files are version-controlled, but there is no standard mechanism for agents to verify that the instructions are current, that the commands still work, or that the architectural constraints still match the actual codebase. A stale AGENTS.md can be worse than no AGENTS.md.
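Staleness of this kind can be partially detected in CI. The sketch below is one hypothetical approach, not a mechanism from the spec or the papers: it pulls anything shaped like a repository path out of AGENTS.md and reports the ones that no longer match a file in the repository. `stale_paths` and the regular expression are assumptions, and the pattern will also match non-path text such as `async/await`, so expect false positives.

```python
import re

# Heuristic: anything shaped like dir/dir/file or dir/dir/ is treated as a repo path.
PATH_PATTERN = re.compile(r"\b(?:[\w.-]+/)+[\w.-]*")


def stale_paths(agents_md: str, repo_files: set[str]) -> list[str]:
    """Return paths mentioned in AGENTS.md that match no file in the repository."""
    stale = []
    for mentioned in PATH_PATTERN.findall(agents_md):
        mentioned = mentioned.rstrip(".")  # drop a sentence-ending period
        prefix = mentioned if mentioned.endswith("/") else mentioned + "/"
        live = any(f == mentioned or f.startswith(prefix) for f in repo_files)
        if not live and mentioned not in stale:
            stale.append(mentioned)
    return stale


agents = """- DO NOT modify packages/auth/ without approval from a human reviewer
- Feature flags live in packages/config/flags.ts
"""
# Hypothetical repository state in which flags.ts has since been renamed.
repo = {"packages/auth/index.ts", "packages/config/featureFlags.ts"}
print(stale_paths(agents, repo))
# → ['packages/config/flags.ts']
```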

Technical Moats

The 60,000-repository adoption baseline. The format is now broadly deployed across real codebases. The research has access to real-world files to study (Chatlatanagulchai et al. 2025 characterize the actual content of files in the wild: heavily functional, mostly build/test/implementation instructions, sparse on non-functional concerns). This empirical base is the foundation for the research findings. Competing file formats would start from zero adoption.

The executable guardrail architecture (ContextCov). The transition from "text instructions" to "compiled executable checks" is a qualitatively different approach to agent configuration. Treating AGENTS.md instructions as executable specifications (not passive documentation) addresses the Reality Gap at the infrastructure level, not by trying to make LLMs better at following instructions. Hierarchical constraint extraction (path-aware parsing that preserves semantic scope) and domain-routed policy synthesis are non-trivial to replicate.

The AGENTbench benchmark. The Gloaguen et al. study (arXiv:2602.11988) constructed AGENTbench from 138 software engineering tasks drawn from real GitHub pull requests in repositories that already have AGENTS.md files. This is the correct experimental design for evaluating context files: test on repositories where developers have already made decisions about what to put in the file. Constructing a comparable benchmark requires identifying repositories with high-quality developer-written files, which is a curation challenge.
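To illustrate the static-analysis domain of the guardrail architecture described above, the sketch below uses Python's standard `ast` module. It is an analog, not ContextCov's implementation: the example rule discussed in this issue is JavaScript's `var`, and here a hypothetical rule "never call eval" stands in for it. `find_forbidden_calls` is a name introduced for this sketch.

```python
import ast


def find_forbidden_calls(source: str, forbidden: set[str]) -> list[tuple[int, str]]:
    """Return (line, name) for every direct call to a forbidden function name."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in forbidden:
                violations.append((node.lineno, node.func.id))
    return sorted(violations)


agent_patch = "x = eval('1 + 1')\ny = int('2')\n# eval in a comment is ignored\n"
print(find_forbidden_calls(agent_patch, {"eval"}))
# → [(1, 'eval')]
```

Working on the syntax tree rather than on raw text is what lets the check ignore comments and string literals, which a plain text search would flag.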

Insights

Insight One: The research shows that LLM-generated AGENTS.md files are harmful, but the industry tool that generates them (running /init in Claude Code or Codex) has not updated its behavior to reflect this finding. There is a gap between what the research shows and what the tools ship.

Gloaguen et al. (arXiv:2602.11988) explicitly test the exact workflow most developers use: run the agent's built-in initialization command to auto-generate an AGENTS.md file. Across the four tested agents, LLM-generated files reduce task success rates in 5 of the 8 tested settings. Despite this, /init and similar commands continue to generate architecture-heavy context files. The practical implication is that developers who run these commands and assume the output helps their agents are, on average, making their agents slightly worse while paying ~21% more per task for the privilege.

Insight Two: The efficiency improvement (runtime and tokens) and the effectiveness degradation (task success) in the research are measuring different causal mechanisms, and conflating them produces the wrong conclusion about whether AGENTS.md is "good" or "bad."

The JAWs 2026 paper (arXiv:2601.20404) measures efficiency. The ETH Zurich paper (arXiv:2602.11988) measures effectiveness. They are studying different outcomes on different tasks. An AGENTS.md file that contains minimal, non-discoverable content will produce both efficiency improvement (agents skip exploration) and effectiveness improvement (agents avoid redundant information) simultaneously. A file that contains redundant architecture documentation will produce efficiency improvement (still skips some exploration) but effectiveness degradation (increases false path exploration). The two findings are consistent, not contradictory, once you understand which kind of file each study primarily evaluated.

Takeaway

The ContextCov paper's (arXiv:2603.00822) framing is the most important intellectual contribution in the AGENTS.md research space, and it is the least discussed: "Agent Instructions must be treated as executable specifications, not passive documentation but verifiable code that compiles into runtime checks."

This is not a minor engineering improvement. It is a category shift in how we think about agent configuration. AGENTS.md as passive documentation is a README for agents. AGENTS.md as executable specification is a type system for agent behavior. The same way a compiler rejects code that violates type constraints, ContextCov rejects agent actions that violate AGENTS.md constraints. The analogy is exact: both approaches move constraint enforcement from "rely on the consumer to follow rules" (soft enforcement, frequently violated) to "make violation structurally impossible" (hard enforcement, always enforced). For agentic software engineering at scale, where agents are making dozens of decisions per task without human supervision, this is the correct direction.

TL;DR For Engineers

AGENTS.md reduces agent runtime by 28.64% and output tokens by 16.58% when used correctly (arXiv:2601.20404, 10 repos, 124 PRs). This is the efficiency benefit. It is real.

LLM-generated AGENTS.md files reduce task success rates by ~3% and increase inference cost by 20-23% (arXiv:2602.11988, 4 agents, 2 benchmarks). Running /init and accepting the output makes your agent worse on average.

Developer-written AGENTS.md files with minimal, non-discoverable content improve task success by ~4pp. The correct content: custom commands, project-specific deviations, architectural constraints, security boundaries. Not: architecture overviews, standard framework patterns, documentation that duplicates existing files.

Context Drift (agents following immediate code context over AGENTS.md when they conflict) is the primary failure mode. ContextCov (arXiv:2603.00822) addresses this by compiling natural language instructions into executable static analysis, runtime shell shims, and architectural validators.

The format is now governed by the Agentic AI Foundation (Linux Foundation), adopted by 60,000 repositories, and supported natively by Claude Code, Codex, and Qwen Code. It is the de facto standard for repository-level agent configuration.

The README for Agents Works. The Auto-Generated Version Does Not.

AGENTS.md is the correct abstraction for repository-level agent configuration. A dedicated, version-controlled, human-readable file that tells agents what they cannot figure out on their own is the right primitive. The research does not challenge this. It challenges two specific failure modes: LLM-generated files that duplicate existing documentation, and passive text instructions that agents ignore under Context Drift. Both failures are fixable without abandoning the format. The fix for the first: write it yourself, keep it minimal, include only non-discoverable information. The fix for the second: treat AGENTS.md instructions as executable specifications using ContextCov or equivalent tools. The format has a sound design. The community's current implementation of it does not.

References

agents.md GitHub Repository, 20.9k stars, 1.5k forks, MIT, open format specification

Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents? arXiv:2602.11988, Gloaguen et al., ETH Zurich, February 2026

On the Impact of AGENTS.md Files on the Efficiency of AI Coding Agents, arXiv:2601.20404, Lulla et al., JAWs 2026

ContextCov: Deriving and Enforcing Executable Constraints from Agent Instruction Files, arXiv:2603.00822, Reshabh K Sharma, University of Washington, February 2026

When AGENTS.md Backfires: What a New Study Says About Context Files and Coding Agents, Chris Groves, February 2026

How to Build Your AGENTS.md (2026), Augment Code, March 2026

The research is in: your AGENTS.md is probably too long, Upsun Developer, April 2026

AGENTS.md is an open format (20.9k GitHub stars, 60,000 repositories, governed by the Agentic AI Foundation under the Linux Foundation) for providing repository-level context to AI coding agents via a markdown file at the repository root. Three 2026 papers establish the empirical record: developer-written AGENTS.md files improve task success rates by ~4pp and reduce runtime by 28.64% and output tokens by 16.58% by providing non-discoverable, non-standard information agents cannot find on their own; LLM-generated files reduce task success rates by ~3% and increase inference costs by 20-23% by duplicating information agents already have; ContextCov (arXiv:2603.00822) addresses Context Drift (agents ignoring instructions when legacy code conflicts) by compiling passive AGENTS.md text into active executable guardrails spanning static AST analysis, runtime shell shims, and architectural validators.
