SnackOnAI Engineering | Senior AI Systems Researcher | Technical Deep Dive | 11 April 2026

Everyone obsesses over the model. Bigger context windows, faster attention kernels, flashier benchmarks. But here's what the benchmark leaderboards won't tell you: a 70B model on a poorly orchestrated cluster can underperform a 7B model on a well-designed control plane, at 3x the cost.

AIBrix is built on this exact premise. It's not a better model. It's not a faster kernel. It's the missing orchestration layer that makes everything else stop bleeding money at scale, and the community has been largely sleeping on it.

What It Actually Does

AIBrix is a cloud-native, open-source infrastructure framework from ByteDance's infrastructure team, now under the vllm-project umbrella. It was designed to answer one question: how do you run dozens, or hundreds, of vLLM instances in production without losing your mind or your GPU budget?

What it is not: a new inference engine. It doesn't touch model weights, attention kernels, or CUDA graphs.

What it actually does:

Manages LoRA adapters dynamically across a fleet of base model pods, without requiring one GPU per adapter

Routes inference requests based on KV cache state, not round-robin blindness

Autoscales on KV cache pressure, not QPS (which is the wrong signal for LLMs)

Provides a distributed KV cache that spans multiple vLLM nodes so prefix computations are not thrown away between requests

Runs a unified sidecar (AI Runtime) so the control plane doesn't need to know whether you're using vLLM, SGLang, or TensorRT-LLM

Supports prefill/decode disaggregation routing natively, as of v0.6.0

The key numbers from the paper: a 50% throughput increase and a 70% latency reduction from the distributed KV cache alone. Separately, the routing optimizations deliver a 19.2% mean latency reduction and up to a 79% P99 latency reduction compared to naive round-robin. The autoscaler beats native Kubernetes HPA, reducing latency by 11.5%, increasing token throughput by 11.4%, and cutting scaling oscillations by 33%.

These are not academic benchmarks. They come from ByteDance's production deployment.

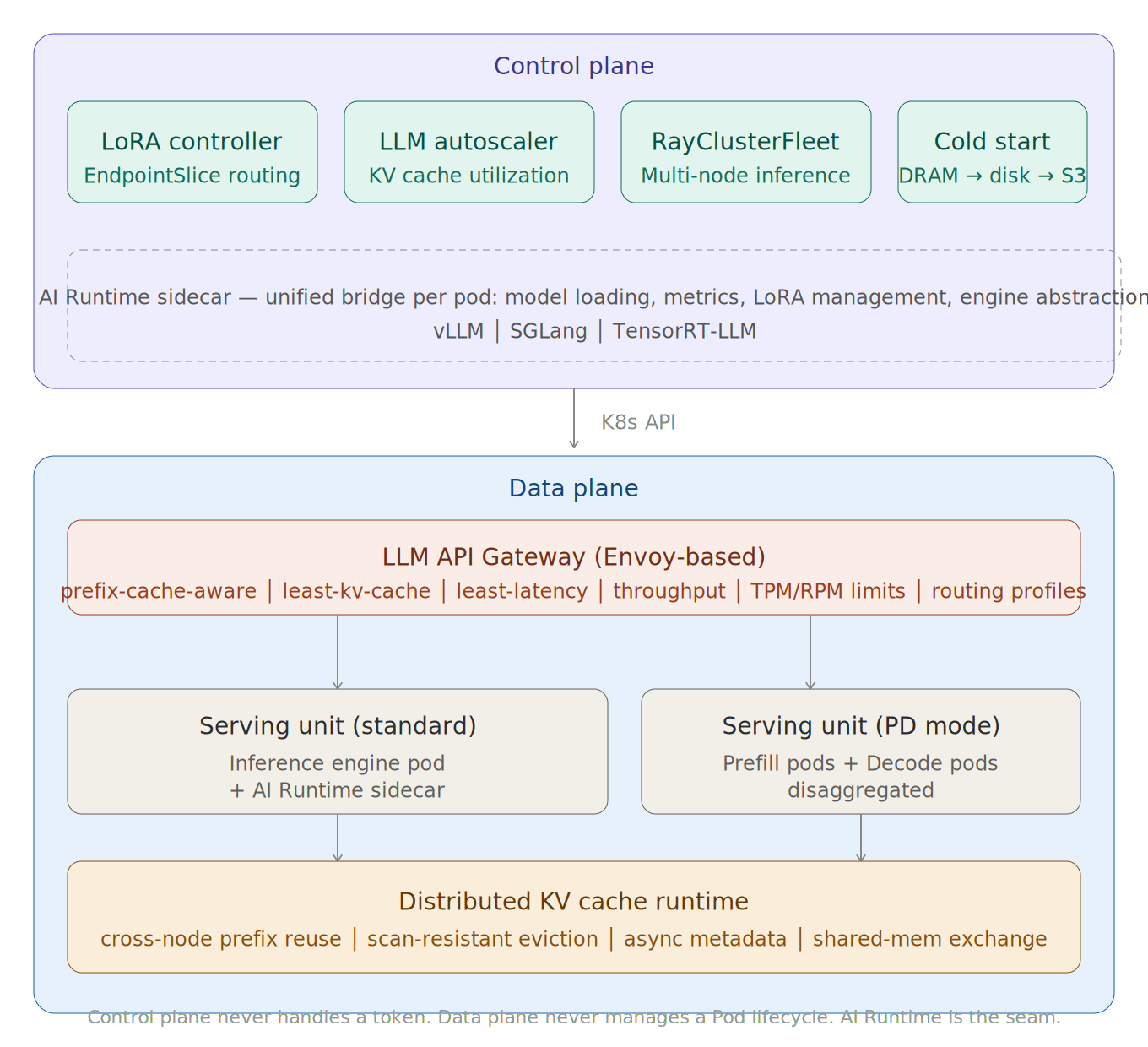

The Architecture, Unpacked

AIBrix separates cleanly into a control plane and a data plane. Here's the full system laid out:

```text
┌─────────────────────────────────────────────────────────────────────────────┐
│                            AIBrix CONTROL PLANE                             │
│                                                                             │
│  ┌────────────────────┐  ┌────────────────────┐  ┌────────────────────────┐ │
│  │ LoRA Adapter       │  │ LLM-Specific       │  │ RayClusterFleet        │ │
│  │ Controller         │  │ Autoscaler (APA)   │  │ Controller             │ │
│  │ (K8s EndpointSlice │  │ (KV cache metrics, │  │ (multi-node Ray jobs)  │ │
│  │  for LoRA routing) │  │  sliding window)   │  │                        │ │
│  └─────────┬──────────┘  └─────────┬──────────┘  └────────────────────────┘ │
│            │                       │                                        │
│  ┌─────────┴───────────────────────┴──────────────────────────────────────┐ │
│  │                           Cold Start Manager                           │ │
│  │      (tracks model artifacts: DRAM → local disk → cloud storage)       │ │
│  └────────────────────────────────────────────────────────────────────────┘ │
└──────────────────────────────────────┬──────────────────────────────────────┘
                                       │ K8s API, gRPC
                                       ▼
┌─────────────────────────────────────────────────────────────────────────────┐
│                              AIBrix DATA PLANE                              │
│                                                                             │
│  ┌────────────────────────────────────────────────────────────────────────┐ │
│  │                     LLM API Gateway (Envoy-based)                      │ │
│  │    prefix-cache-aware · least-kv-cache · least-latency · throughput    │ │
│  │         TPM/RPM rate limiting · workload isolation · fairness          │ │
│  └────────┬───────────────────────────────────────────┬───────────────────┘ │
│           │                                           │                     │
│  ┌────────▼────────┐                   ┌──────────────▼─────────────┐       │
│  │  Serving Unit   │                   │        Serving Unit        │       │
│  │ ┌─────────────┐ │                   │ ┌────────────────────────┐ │       │
│  │ │vLLM/SGLang/ │ │                   │ │ vLLM (PD mode)         │ │       │
│  │ │TensorRT-LLM │ │                   │ │ Prefill+Decode pods    │ │       │
│  │ └─────────────┘ │                   │ └────────────────────────┘ │       │
│  │ ┌─────────────┐ │                   └────────────────────────────┘       │
│  │ │ AI Runtime  │ │                                                        │
│  │ │ (sidecar)   │ │ ◄── model loading, metrics, LoRA mgmt                  │
│  │ └─────────────┘ │                                                        │
│  └────────┬────────┘                                                        │
│           │                                                                 │
│  ┌────────▼───────────────────────────────────────────────────────────────┐ │
│  │                      Distributed KV Cache Runtime                      │ │
│  │             (cross-node KV reuse, scan-resistant eviction,             │ │
│  │              async metadata, shared-memory data exchange)              │ │
│  └────────────────────────────────────────────────────────────────────────┘ │
└─────────────────────────────────────────────────────────────────────────────┘
```

Caption: AIBrix separates orchestration concerns from execution. The control plane never handles a token; the data plane never manages a Pod lifecycle. The AI Runtime sidecar is the seam between them.


Key architectural decisions to notice:

Kubernetes for coarse-grained scheduling, Ray for fine-grained execution. For multi-node inference (e.g., DeepSeek-R1 full weights), AIBrix doesn't pick one or the other. Kubernetes handles the physical resource reservation and lifecycle; Ray handles the distributed tensor parallelism inside that reservation. This is not a "use Ray instead of K8s" story; it's a "both layers doing what they're actually good at" story.

The AI Runtime sidecar decouples the control plane from engine internals. Each vLLM/SGLang/TensorRT-LLM pod carries a lightweight Go/Python sidecar that exposes a unified interface upward. This means the LoRA controller, autoscaler, and cold start manager all speak one API regardless of the inference engine version underneath.

The gateway is not a generic proxy. It's an extended Envoy Gateway. The extensions implement LLM-specific routing policies that inspect token patterns, KV cache state, and GPU memory pressure before making routing decisions.
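To make the sidecar seam concrete, here is a minimal sketch of the decoupling idea, assuming a hypothetical `EngineAdapter` interface. The class names, the raw metric fields each fake engine reports, and the percent normalization are illustrative only and are not the AI Runtime's actual API. The point is that control-plane code such as `fleet_kv_usage` depends only on the interface, never on engine internals.

```python
from typing import Dict, List, Protocol


class EngineAdapter(Protocol):
    """Hypothetical upward-facing interface a sidecar could expose for any engine."""

    def kv_cache_usage(self) -> float:
        """KV cache utilization in percent (0-100)."""
        ...

    def load_lora(self, name: str, path: str) -> None:
        ...


class FakeVLLM:
    """Stand-in engine that reports KV usage as a 0-1 fraction (assumed format)."""

    def __init__(self) -> None:
        self.raw = {"kv_usage_fraction": 0.42}
        self.adapters: Dict[str, str] = {}

    def kv_cache_usage(self) -> float:
        return self.raw["kv_usage_fraction"] * 100  # normalize to percent

    def load_lora(self, name: str, path: str) -> None:
        self.adapters[name] = path  # a real sidecar would fetch, then ask the engine to load


class FakeSGLang:
    """Stand-in engine that reports used/total KV tokens (assumed format)."""

    def __init__(self) -> None:
        self.used_tokens, self.total_tokens = 3_000, 10_000
        self.adapters: Dict[str, str] = {}

    def kv_cache_usage(self) -> float:
        return 100 * self.used_tokens / self.total_tokens

    def load_lora(self, name: str, path: str) -> None:
        self.adapters[name] = path


def fleet_kv_usage(engines: List[EngineAdapter]) -> float:
    """Control-plane code: one normalized signal, no engine-specific branches."""
    return sum(e.kv_cache_usage() for e in engines) / len(engines)
```

Adding a TensorRT-LLM pod in this sketch means writing one more adapter class; nothing in `fleet_kv_usage` changes.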

The Code, Annotated

Snippet 1: LoRA Adapter Manifest with S3 Delivery (v0.6.0)

```yaml
# ModelAdapter CRD: how AIBrix registers a LoRA adapter
apiVersion: model.aibrix.ai/v1alpha1
kind: ModelAdapter
metadata:
  name: llama3-finance-lora
  namespace: production
spec:
  baseModel: llama3-8b-instruct  # ← ties this adapter to a specific base model fleet
  artifactURL: "s3://my-bucket/adapters/finance-v2/"  # ← direct S3 path, no initContainer
  credentialsSecretRef:
    name: aws-s3-credentials  # ← controller reads this and passes creds to AI Runtime
  # The runtime handles the download asynchronously using asyncio.to_thread,
  # so the event loop stays responsive while the adapter is being fetched.
  # This is the key improvement in v0.6.0: no blocking I/O on the critical path.
  scaling:
    minReplicas: 1
    maxReplicas: 10
  # LoRA-aware routing: Kubernetes EndpointSlice is used to track which
  # pods have this adapter loaded. Requests for this model_id are only
  # routed to pods in the EndpointSlice. No adapter loaded = no route.
  routingStrategy: lora-aware
```

Caption: The credentialsSecretRef pattern is the trick here. The controller extracts and forwards credentials directly to the AI Runtime sidecar, keeping credential handling out of the inference engine pod spec entirely.
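The manifest comments say the runtime offloads the adapter download with `asyncio.to_thread`. A minimal sketch of that pattern follows; `blocking_download` is a hypothetical stand-in for a synchronous S3 client call, and the heartbeat task exists only to show that the event loop keeps running while the download is in flight. This is not AIBrix's code.

```python
import asyncio
import time
from typing import List, Tuple


def blocking_download(url: str) -> bytes:
    """Hypothetical stand-in for a blocking S3 fetch (e.g. a synchronous SDK call)."""
    time.sleep(0.2)  # simulates network I/O that would otherwise freeze the event loop
    return f"adapter bytes from {url}".encode()


async def heartbeat(ticks: List[float], stop: asyncio.Event) -> None:
    """Ticks every 20 ms; it can only tick if the event loop is not blocked."""
    while not stop.is_set():
        ticks.append(time.monotonic())
        await asyncio.sleep(0.02)


async def load_adapter(url: str) -> Tuple[bytes, int]:
    ticks: List[float] = []
    stop = asyncio.Event()
    hb = asyncio.create_task(heartbeat(ticks, stop))
    # Run the blocking call in a worker thread; the loop keeps serving other tasks.
    data = await asyncio.to_thread(blocking_download, url)
    stop.set()
    await hb
    return data, len(ticks)


if __name__ == "__main__":
    payload, n = asyncio.run(load_adapter("s3://my-bucket/adapters/finance-v2/"))
    print(payload, f"(heartbeat ticked {n} times during the download)")
```

Calling `blocking_download` directly inside `load_adapter` would starve the heartbeat for the full 200 ms; with `to_thread` it ticks roughly ten times.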

Snippet 2: Routing Profile Definition (v0.6.0 Annotation-based config)

```yaml
# model.aibrix.ai/config annotation on the Deployment.
# This single annotation replaces what used to require separate Deployments
# for different traffic patterns. Its value must be plain JSON (no comments):
#   default:     random routing. Prompt-length bucketing: short prompts
#                (0-4096 tokens) go to standard inference pods; no
#                prefill/decode separation needed.
#   pd:          THIS is the trick: same pod fleet, different routing brain.
#                The gateway scores candidate prefill/decode pods using load,
#                queue depth, and availability signals before selecting, and
#                overflows to standard pods when PD capacity saturates.
#   low-latency: for interactive use cases, always pick the pod with the
#                lowest observed latency rather than trying to match prefix caches.
metadata:
  annotations:
    model.aibrix.ai/config: |
      {
        "defaultProfile": "pd",
        "profiles": {
          "default": {
            "routingStrategy": "random",
            "promptLenBucketMinLength": 0,
            "promptLenBucketMaxLength": 4096
          },
          "pd": {
            "routingStrategy": "pd",
            "promptLenBucketMinLength": 0,
            "promptLenBucketMaxLength": 2048
          },
          "low-latency": {
            "routingStrategy": "least-latency",
            "promptLenBucketMinLength": 0,
            "promptLenBucketMaxLength": 2048
          }
        }
      }
```

```python
# Client side: select the routing profile per request via a header
import openai

client = openai.OpenAI(
    base_url="https://your-aibrix-gateway/v1",
    api_key="your-token",
)

# Placeholder: substitute the real document text
long_document_prompt = "Summarize the following document:\n..."

# Long document summarization → route to PD-optimized pods
response = client.chat.completions.create(
    model="llama3-8b-instruct",
    messages=[{"role": "user", "content": long_document_prompt}],
    extra_headers={"config-profile": "pd"},  # ← single header switches routing brain
)

# Interactive chat → route to lowest-latency pod
response = client.chat.completions.create(
    model="llama3-8b-instruct",
    messages=[{"role": "user", "content": "What is the capital of France?"}],
    extra_headers={"config-profile": "low-latency"},
)
```

Caption: The config-profile header is the runtime escape hatch. One deployment, multiple routing behaviors. The gateway reads the header and applies the corresponding scoring logic without the client knowing which pod won the selection.
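How the gateway maps that header onto a profile isn't spelled out above, so the sketch below is one plausible reading. It assumes that a missing or unknown `config-profile` falls back to `defaultProfile` and that the bucket bounds are inclusive token counts; `resolve_profile` and `fits_bucket` are hypothetical names, not gateway functions.

```python
import json
from typing import Dict, Tuple

# Valid-JSON copy of the model.aibrix.ai/config annotation value above.
CONFIG = json.loads("""
{
  "defaultProfile": "pd",
  "profiles": {
    "default":     {"routingStrategy": "random",
                    "promptLenBucketMinLength": 0, "promptLenBucketMaxLength": 4096},
    "pd":          {"routingStrategy": "pd",
                    "promptLenBucketMinLength": 0, "promptLenBucketMaxLength": 2048},
    "low-latency": {"routingStrategy": "least-latency",
                    "promptLenBucketMinLength": 0, "promptLenBucketMaxLength": 2048}
  }
}
""")


def resolve_profile(config: dict, headers: Dict[str, str]) -> Tuple[str, dict]:
    """Return (profile name, profile) for a request.

    HTTP header names are case-insensitive, so keys are lower-cased first.
    Falling back to defaultProfile for an unknown name is an assumption.
    """
    lowered = {k.lower(): v for k, v in headers.items()}
    name = lowered.get("config-profile", config["defaultProfile"])
    if name not in config["profiles"]:
        name = config["defaultProfile"]
    return name, config["profiles"][name]


def fits_bucket(profile: dict, prompt_tokens: int) -> bool:
    """True if the prompt length lies in the profile's (inclusive) token bucket."""
    return (profile["promptLenBucketMinLength"]
            <= prompt_tokens
            <= profile["promptLenBucketMaxLength"])


name, profile = resolve_profile(CONFIG, {"Config-Profile": "low-latency"})
print(name, profile["routingStrategy"])  # low-latency least-latency
```

Keeping the profiles in one JSON document means the gateway re-reads a single annotation instead of reconciling several Deployments.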

Snippet 3: Autoscaler Deployment with LLM-Specific Metrics

```yaml
# AIBrix Pod Autoscaler (APA): replaces K8s HPA for LLM workloads
apiVersion: autoscaling.aibrix.ai/v1alpha1
kind: PodAutoscaler
metadata:
  name: llama3-autoscaler
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: llama3-8b-instruct
  # ← THIS is the critical difference from HPA:
  # KV cache utilization is the right signal for LLMs.
  # QPS doesn't capture the actual memory pressure that causes OOM or
  # queuing. A single 32k-token request can consume as much KV cache
  # as 100 short requests.
  metrics:
    - type: KVCacheUtilization
      target:
        type: AverageValue
        averageValue: "70"  # keep average KV cache usage across pods around 70%
  # Sliding window aggregation: instead of snapshotting the metric every 15s
  # (standard K8s), AIBrix maintains a rolling window for real-time load.
  # This cuts propagation delay from ~30s (custom metrics path) to ~1s.
  slidingWindowSeconds: 10
  behavior:
    scaleDown:
      stabilizationWindowSeconds: 120  # conservative scale-down
    scaleUp:
      stabilizationWindowSeconds: 0    # aggressive scale-up
```

Caption: A 70% KVCacheUtilization target is a reasonable starting threshold. As KV cache usage approaches saturation, vLLM has to evict cached prefix blocks to make room (and eventually preempts running requests), destroying the cache-hit rates your gateway worked hard to build. Scale before you lose the cache.
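The two mechanisms this manifest relies on can be sketched as follows, under stated assumptions: the windowed mean stands in for APA's sliding-window aggregation, while the replica math and the asymmetric stabilization follow the Kubernetes HPA's documented algorithm (omitting its default 10% tolerance band). AIBrix's APA may compute its recommendation differently, and `SlidingWindow` and `Stabilizer` are hypothetical names.

```python
import math
from collections import deque
from typing import Deque, Tuple


class SlidingWindow:
    """Mean of the samples received in the last `window_s` seconds."""

    def __init__(self, window_s: float) -> None:
        self.window_s = window_s
        self.samples: Deque[Tuple[float, float]] = deque()

    def add(self, now: float, value: float) -> None:
        self.samples.append((now, value))
        while self.samples and self.samples[0][0] < now - self.window_s:
            self.samples.popleft()  # drop samples older than the window

    def mean(self) -> float:
        return sum(v for _, v in self.samples) / len(self.samples)


def raw_recommendation(current: int, metric: float, target: float) -> int:
    """HPA's proportional rule: ceil(current * metric / target)."""
    return math.ceil(current * metric / target)


class Stabilizer:
    """Scale up at once (scale-up window 0 s); scale down only as far as the
    highest recommendation seen in the last `down_window_s` seconds."""

    def __init__(self, down_window_s: float) -> None:
        self.down_window_s = down_window_s
        self.history: Deque[Tuple[float, int]] = deque()

    def decide(self, now: float, rec: int, current: int) -> int:
        self.history.append((now, rec))
        while self.history and self.history[0][0] < now - self.down_window_s:
            self.history.popleft()
        if rec > current:
            return rec
        return min(current, max(r for _, r in self.history))


window, stab, replicas = SlidingWindow(10), Stabilizer(120), 8
for t, usage in [(0, 60), (5, 80), (12, 90)]:
    window.add(t, usage)
replicas = stab.decide(12, raw_recommendation(replicas, window.mean(), 70), replicas)
print(replicas)  # 10: the 60% sample aged out, mean is 85%, ceil(8 * 85 / 70) = 10
```

If usage then drops to 40%, the raw recommendation for 10 replicas is 6, but the stabilizer holds at 10 until the earlier recommendation of 10 ages out of the 120-second window; that asymmetry is what damps oscillation.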

AIBrix in Action: End-to-End Worked Example

Scenario: A legal-tech SaaS deploys llama3-70b-instruct with 12 fine-tuned LoRA adapters (one per practice area) across 8 A100 80GB GPU nodes. Before AIBrix: 12 separate Deployments, each pinned to one LoRA, 8 pods per deployment = 96 pods, each holding its own full copy of the 70B base weights. After AIBrix: 8 pods sharing the base model, with LoRA adapters loaded and unloaded dynamically.

Step 1: Request arrives at gateway

POST /v1/chat/completions

Headers: config-profile: low-latency

Body: { model: "llama3-70b-instruct/contracts-lora", messages: [...] }

The gateway parses the model field to extract contracts-lora as the adapter name. It then queries the LoRA adapter's EndpointSlice to find pods where contracts-lora is currently loaded.
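A minimal sketch of this lookup, assuming the `base/adapter` naming shown in the request body and using a plain dictionary as a hypothetical stand-in for the per-adapter EndpointSlices (the real controller keeps that mapping in Kubernetes objects):

```python
from typing import Dict, Optional, Set, Tuple


def split_model(model: str) -> Tuple[str, Optional[str]]:
    """'base/adapter' -> (base, adapter); a bare name has no adapter."""
    base, sep, adapter = model.partition("/")
    return base, (adapter if sep else None)


def candidate_pods(model: str,
                   adapter_slices: Dict[str, Set[str]],
                   base_pods: Dict[str, Set[str]]) -> Set[str]:
    """Pods allowed to serve the request.

    adapter_slices stands in for per-adapter EndpointSlices (adapter -> pods
    with it loaded); base_pods maps each base model to all of its pods.
    """
    base, adapter = split_model(model)
    pods = base_pods.get(base, set())
    if adapter is None:
        return set(pods)
    return pods & adapter_slices.get(adapter, set())  # no adapter loaded = no route


slices = {"contracts-lora": {"pod-1", "pod-3"}}
fleet = {"llama3-70b-instruct": {"pod-0", "pod-1", "pod-2", "pod-3"}}
print(sorted(candidate_pods("llama3-70b-instruct/contracts-lora", slices, fleet)))
# ['pod-1', 'pod-3']
```

A naive split on the first slash would misread Hugging Face-style names such as `org/model`, so a production gateway needs an unambiguous naming rule; the sketch ignores that.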

Step 2: Gateway routing decision

Profile is low-latency, so the scoring logic ranks candidate pods by observed P50 latency. If no pod has contracts-lora loaded, the AI Runtime sidecar on a target pod loads it from S3 (async, non-blocking), first evicting the least-recently-used adapter if that pod's adapter slots are full. Worst-case cold-load latency: ~2-4 seconds for a LoRA adapter (not 2-3 minutes for a full model pod spin-up).
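Both halves of this step fit in a few lines. The sketch below assumes a fixed number of adapter slots per pod and plain LRU eviction, and uses a dictionary of P50 latencies in milliseconds; `AdapterSlots` and `pick_least_latency` are hypothetical, and the actual sidecar's slot accounting is not described in the source.

```python
from collections import OrderedDict
from typing import Dict, Optional


class AdapterSlots:
    """One pod's LoRA slots with least-recently-used eviction (hypothetical)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.loaded: "OrderedDict[str, None]" = OrderedDict()

    def ensure_loaded(self, adapter: str) -> Optional[str]:
        """Use (and load if needed) an adapter; return whatever was evicted."""
        if adapter in self.loaded:
            self.loaded.move_to_end(adapter)  # now most recently used
            return None
        evicted = None
        if len(self.loaded) >= self.capacity:
            evicted, _ = self.loaded.popitem(last=False)  # least recently used
        self.loaded[adapter] = None  # real sidecar: async fetch from S3, then load
        return evicted


def pick_least_latency(p50_ms: Dict[str, float]) -> str:
    """low-latency profile: lowest observed P50 latency wins."""
    return min(p50_ms, key=lambda pod: p50_ms[pod])


slots = AdapterSlots(capacity=2)
for name in ["tax-lora", "ip-lora", "tax-lora"]:
    slots.ensure_loaded(name)
print(slots.ensure_loaded("contracts-lora"))  # ip-lora (tax-lora was touched more recently)
```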

Step 3: KV cache lookup

Before the request hits vLLM, the distributed KV cache runtime checks whether the prompt prefix (e.g., standard legal boilerplate that appears in 80% of requests) is cached anywhere in the cluster. On a cache hit, prefill is skipped for the cached prefix and only the remaining tokens are computed. On a 32k-token contract prompt where the first 20k tokens are cached boilerplate, 62.5% of prompt tokens (20k of 32k) skip prefill.
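The lookup and the arithmetic can be sketched together, assuming a hypothetical cluster-wide index of chained block hashes in the spirit of the block-level hashing vLLM uses for automatic prefix caching. Because each hash covers its block and everything before it, the first miss ends the shared prefix. AIBrix's actual cache metadata format is not described in the source.

```python
import hashlib
from typing import List, Set

BLOCK_SIZE = 16  # tokens per KV block; 16 matches vLLM's default block size on GPUs


def block_hashes(tokens: List[int], block_size: int = BLOCK_SIZE) -> List[bytes]:
    """Chained hash per full block: block i's hash covers blocks 0..i."""
    hashes: List[bytes] = []
    parent = b""
    for start in range(0, len(tokens) - block_size + 1, block_size):
        block = tokens[start:start + block_size]
        parent = hashlib.sha256(parent + repr(block).encode()).digest()
        hashes.append(parent)
    return hashes


def cached_prefix_tokens(tokens: List[int], index: Set[bytes],
                         block_size: int = BLOCK_SIZE) -> int:
    """Leading tokens whose KV blocks already exist somewhere in the index."""
    matched = 0
    for h in block_hashes(tokens, block_size):
        if h not in index:
            break  # chained hashes: the first miss ends the shared prefix
        matched += 1
    return matched * block_size


boilerplate = list(range(20_000))                         # shared legal boilerplate
earlier = boilerplate + [100_000 + i for i in range(12_000)]
current = boilerplate + [200_000 + i for i in range(12_000)]
cluster_index = set(block_hashes(earlier))                 # blocks cached by a prior request
hit = cached_prefix_tokens(current, cluster_index)
print(hit, hit / len(current))  # 20000 0.625
```

The 62.5% counts prompt tokens, not FLOPs: the 12k uncached tokens still attend over the full 32k context, so the compute saved is somewhat lower than the token fraction suggests.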

Step 4: Inference execution

vLLM prefills only the uncached remainder of the prompt (nothing, if the whole prompt was cached), then decodes and streams tokens back through the gateway.

Step 5: Autoscaler monitoring

APA aggregates KV cache utilization across all 8 pods over a 10-second sliding window. If utilization exceeds the 70% target, a scale-out event triggers. With the model artifacts pre-warmed in node DRAM (tracked by the Cold Start Manager), new pod startup is under 30 seconds vs. the 2-3 minute baseline of pulling a 70B model from scratch.
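The Cold Start Manager's tiering (DRAM → local disk → cloud storage, per the architecture diagram) amounts to "load from the fastest tier that has the artifact." A minimal sketch, assuming hypothetical per-node bookkeeping of which artifacts sit in which tier and an assumed policy of warming the faster tiers after a slow load:

```python
from typing import Dict, List, Set, Tuple

TIERS = ["dram", "local_disk", "cloud_storage"]  # fastest first, as in the diagram


def plan_load(artifact: str, locations: Dict[str, Set[str]]) -> Tuple[str, List[str]]:
    """Pick the fastest tier holding the artifact, plus faster tiers to warm next.

    `locations` (tier -> artifacts present on this node) is hypothetical
    bookkeeping; warming faster tiers afterwards is an assumed policy.
    """
    for i, tier in enumerate(TIERS):
        if artifact in locations.get(tier, set()):
            return tier, TIERS[:i]
    raise LookupError(f"{artifact!r} not found in any tier")


node = {"dram": set(),
        "local_disk": {"llama3-70b-instruct"},
        "cloud_storage": {"llama3-70b-instruct", "llama3-8b-instruct"}}
print(plan_load("llama3-70b-instruct", node))  # ('local_disk', ['dram'])
```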

Outcomes for this scenario (pod counts follow from the setup above; latency and autoscaler figures come from the paper and v0.6.0 release notes):

| Metric | Before AIBrix | After AIBrix |
|---|---|---|
| GPU pods required for 12 LoRAs | 96 (8 per adapter) | 8 (shared base) |
| Prefix cache hit rate (boilerplate-heavy) | Per-pod only (no cross-pod sharing) | ~60-80% (distributed KV cache) |
| Inference latency | Baseline | -19.2% mean (routing); up to -70% (distributed KV cache) |
| Autoscaler oscillation | Baseline HPA | 33% fewer oscillations |
| Pod cold start | 2-3 minutes | < 30 seconds (Cold Start Manager) |

Why This Design Works (and What It Trades Away)

Why it works:

LLM inference has three properties that make generic cloud-native stacks fail: requests are stateful (KV cache), variable-cost (short and long prompts can differ by an order of magnitude in cost), and adapter-multiplexed (enterprises run many fine-tunes off one base). None of KServe, Knative, or Ray Serve handle all three. AIBrix was designed from the ground up for exactly these constraints.

The co-design philosophy between the control plane and vLLM is the real moat. When the autoscaler knows the internal structure of vLLM's KV cache, it can scale on the right signal. When the gateway knows which pods have which prefix cached, it can route intelligently. These aren't generic ML platform features bolted onto an LLM. They're purpose-built joints.

What it trades away:

Complexity. Running AIBrix is not simpler than running bare vLLM. You're taking on CRD management, Envoy gateway configuration, distributed cache tuning, and a sidecar in every pod. The ops burden is real. For a team running one or two models at modest scale (< 10 pods), AIBrix is probably premature optimization. For a team running 50+ heterogeneous model deployments across hundreds of GPUs, it's the thing that makes the economics work.

There's also a meaningful lock-in to the Kubernetes ecosystem. If your inference runs on bare metal or in an environment where K8s is second-class, AIBrix's control plane becomes painful. The v0.6.0 standalone gateway mode (runs without K8s) is a partial answer, but the LoRA controller and autoscaler still require K8s.

Technical Moats

What makes this hard to replicate:

The distributed KV cache is the deepest moat. The paper's specific innovations are not off-the-shelf components: scan-resistant eviction (keeps a burst of one-off prefixes from flushing hot, frequently reused ones out of the cache), asynchronous metadata updates (removes synchronization bottlenecks), and shared-memory-based data exchange (zero-copy within a node). They required deep integration with vLLM's internal KV block management. A competitor would need to re-implement these at the vLLM API boundary, not at a higher layer.
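The source doesn't specify AIBrix's eviction algorithm, so the following is only an illustration of what "scan-resistant" means, using a simplified two-segment policy in the spirit of 2Q/SLRU and assuming cache keys are prefix identifiers. New keys enter a small probation FIFO; only a second access promotes them to the protected LRU, so a flood of never-repeated prompts churns probation and leaves the hot prefixes alone.

```python
from collections import OrderedDict


class ScanResistantCache:
    """Simplified two-segment (2Q/SLRU-style) cache.

    First access: key enters a small FIFO probation segment.
    Second access while on probation: key is promoted to the protected LRU.
    A burst of one-off keys only churns probation, so hot keys survive.
    """

    def __init__(self, probation_size: int, protected_size: int) -> None:
        self.probation_size = probation_size
        self.protected_size = protected_size
        self.probation: "OrderedDict[str, None]" = OrderedDict()
        self.protected: "OrderedDict[str, None]" = OrderedDict()

    def access(self, key: str) -> bool:
        """Record an access; return True on a hit."""
        if key in self.protected:
            self.protected.move_to_end(key)
            return True
        if key in self.probation:
            del self.probation[key]
            self.protected[key] = None
            if len(self.protected) > self.protected_size:
                self.protected.popitem(last=False)  # evict LRU protected key
            return True
        self.probation[key] = None
        if len(self.probation) > self.probation_size:
            self.probation.popitem(last=False)  # FIFO eviction of one-off keys
        return False

    def __contains__(self, key: str) -> bool:
        return key in self.protected or key in self.probation


cache = ScanResistantCache(probation_size=2, protected_size=2)
cache.access("system-prompt")
cache.access("system-prompt")           # reused -> promoted to protected
for i in range(100):
    cache.access(f"one-off-{i}")        # a scan of never-repeated prefixes
print("system-prompt" in cache)         # True
```

A plain LRU with the same total capacity of four entries would have evicted the shared system prompt after the fourth one-off request.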

The EndpointSlice-based LoRA routing mechanism is also non-trivial. Using K8s native machinery (EndpointSlices) to track adapter-to-pod mappings means zero additional state management infrastructure. It's elegant systems design that's hard to replicate without deeply understanding both K8s internals and LLM adapter lifecycles.

Finally, the ByteDance production pedigree matters. AIBrix is not a research prototype. It handles ByteDance's internal LLM serving load, which is one of the largest in the industry. The failure modes it handles (GPU hardware diagnostics, failure mockup tools, rolling upgrades on RayClusterFleet) exist because they hit production. You can't paper over that with a clean open-source implementation.

Insights

Insight 1: AIBrix's biggest competitor is not KServe or Ray Serve. It's operational chaos.

The real alternative to AIBrix in most enterprises is a pile of Helm charts, custom bash scripts, and a Slack channel called #gpu-fires. The comparison the paper makes to KServe and Knative is technically correct but strategically wrong: those platforms failed at LLM serving because teams hacked around them, not because teams chose a better alternative. AIBrix succeeds if it reduces the toil of that hacky middle state. Whether it does depends almost entirely on how good the documentation and operator UX become, not on the architecture papers.

Insight 2: The routing policy improvements matter more than the distributed KV cache, but get less airtime.

The distributed KV cache's 50% throughput / 70% latency numbers are headline-grabbing. But they require workloads with high prefix overlap (chatbots, document pipelines, RAG with shared system prompts). The routing improvements, a 19.2% mean latency reduction and a 79% P99 latency reduction, apply to almost any LLM workload and require no workload-specific tuning. The prefix-cache-aware routing strategy alone, routing requests to pods that have already computed the prefix, is a force multiplier that works whether your cache is local or distributed. This feature is undersold.
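Because this argument rests on prefix-cache-aware routing, a minimal sketch of the idea may help. It assumes the gateway tracks, per pod, a set of chained block hashes (16-token blocks) plus in-flight request counts; the `max_imbalance` fallback and all names are hypothetical, and AIBrix's own prefix-cache router may score pods differently.

```python
import hashlib
from typing import Dict, List, Set


def prefix_hashes(tokens: List[int], block_size: int = 16) -> List[bytes]:
    """Chained per-block hashes: equal hash at block i means equal prefix up to i."""
    out: List[bytes] = []
    parent = b""
    for start in range(0, len(tokens) - block_size + 1, block_size):
        block = tokens[start:start + block_size]
        parent = hashlib.sha256(parent + repr(block).encode()).digest()
        out.append(parent)
    return out


def route(tokens: List[int], pod_blocks: Dict[str, Set[bytes]],
          inflight: Dict[str, int], max_imbalance: int = 8) -> str:
    """Prefer the pod caching the longest prefix; break ties by fewer in-flight requests.

    max_imbalance is a hypothetical guard: if the best-matching pod is that much
    busier than the least-loaded pod, send the request to the least-loaded pod.
    """
    hashes = prefix_hashes(tokens)

    def matched_blocks(pod: str) -> int:
        n = 0
        for h in hashes:
            if h not in pod_blocks[pod]:
                break
            n += 1
        return n

    best = max(pod_blocks, key=lambda p: (matched_blocks(p), -inflight[p]))
    least = min(pod_blocks, key=lambda p: inflight[p])
    return least if inflight[best] - inflight[least] > max_imbalance else best


prompt = list(range(64))  # 4 blocks of 16 tokens
pods = {"pod-a": set(prefix_hashes(prompt[:32])),  # caches the first 2 blocks
        "pod-b": set(prefix_hashes(prompt)),       # caches all 4 blocks
        "pod-c": set()}
print(route(prompt, pods, {"pod-a": 1, "pod-b": 2, "pod-c": 0}))   # pod-b
print(route(prompt, pods, {"pod-a": 1, "pod-b": 20, "pod-c": 0}))  # pod-c
```

Without some load guard, a popular shared prefix would pin all its traffic to one pod; the guard trades a cache miss for avoiding that hotspot.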

Takeaway

The MLA/prefix-cache incompatibility in vLLM reveals exactly why AIBrix exists.

The paper mentions this in passing and it deserves attention: in vLLM v0.7.1, enabling Multi-Head Latent Attention (MLA) for DeepSeek-R1 forces you to disable prefix caching in vLLM. This is a fundamental engine-level constraint. AIBrix's distributed KV cache solves this by operating outside vLLM's internal prefix cache, enabling cross-node KV reuse even when the engine's built-in prefix cache is disabled. This is not a workaround. It's an architectural proof that the orchestration layer can compensate for inference engine limitations, and it's a structural advantage that will matter more as future models introduce more such constraints.

TL;DR For Engineers

AIBrix is a Kubernetes-native orchestration layer for vLLM and other inference engines, built by ByteDance, live in production, with 4.7k GitHub stars and growing.

The distributed KV cache is the headline feature: 50% throughput gain, 70% latency reduction, by sharing prefix computations across pods.

Routing profiles (v0.6.0) let you define multiple routing strategies in one deployment and switch them per-request via a header. This eliminates the "separate cluster per traffic pattern" anti-pattern.

The autoscaler targets KV cache utilization, not QPS. This is the correct signal for LLMs, and in the paper's evaluation it beats Kubernetes HPA on latency, throughput, and scaling oscillations.

The operational cost is real. AIBrix is not a weekend setup for a small team. It pays off at 50+ pods, heterogeneous GPU fleets, and multiple LoRA adapters over shared base models.

Control Plane Is the New Moat

The era of "just run vLLM in a Docker container" ends at serious scale. The real question in enterprise LLM serving is not which model, not which attention kernel, but who controls the control plane. AIBrix is a credible, production-proven answer to that question. It's not finished (261 open issues, P/D disaggregation still maturing, and the standalone mode needs more hardening), but it's the most complete open-source answer currently available.

The teams that master control-plane orchestration will run LLMs at half the cost and twice the throughput of teams that don't. That's the actual frontier. AIBrix is a map.

References

AIBrix GitHub Repository: source, issues, PRs, 4.7k stars, 532 forks

AIBrix paper (arXiv:2504.03648): primary technical reference

AIBrix v0.6.0 Release Blog: routing profiles, Envoy sidecar, LoRA delivery improvements

KubeCon EU 2025 Keynote, "LLM-Aware Load Balancing in Kubernetes": AIBrix + Google co-presentation on gateway routing

KubeCon NA 2025 Keynote, "AIBrix Kubernetes-native GenAI Inference Infrastructure": architecture overview

Efficient Memory Management for Large Language Model Serving with PagedAttention (vLLM paper, arXiv:2309.06180): the inference engine AIBrix is co-designed with

DeepSeek-V3 Technical Report (arXiv:2412.19437): context for MLA and why prefix cache interactions matter

gateway-api-inference-extension (Google + AIBrix collaboration): upstream K8s gateway work

AIBrix is a cloud-native LLM orchestration framework from ByteDance, now under the vllm-project umbrella, that delivers purpose-built control and data plane components for large-scale vLLM deployments. It combines a distributed KV cache (reported at 50% higher throughput and 70% lower latency on its own), LLM-aware routing, and a KV-cache-driven autoscaler, making it the most complete open-source answer to the LLM serving infrastructure problem currently available.
