SnackOnAI Engineering | Senior AI Systems Researcher | Technical Deep Dive | April 23, 2026

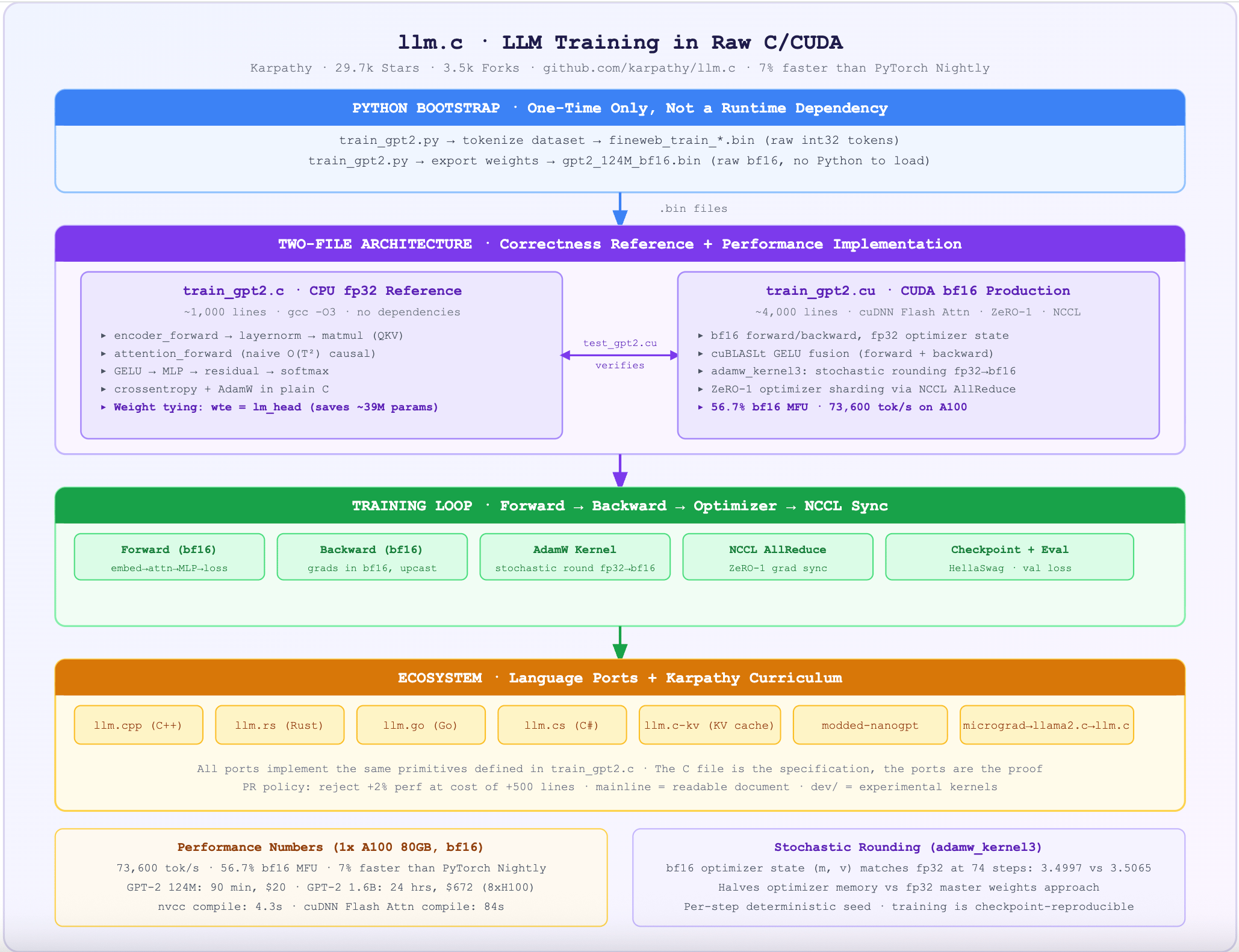

The ML community treats PyTorch as load-bearing infrastructure. 245MB of framework, 107MB of cPython, a dependency graph that takes minutes to install and seconds to import. llm.c asks a direct question: what is actually necessary to train a large language model, and what is just framework overhead? The answer is a single C file, a single CUDA file, and a training loop that outpaces PyTorch Nightly by 7% on GPT-2 (124M) while carrying zero framework dependencies.

This newsletter dissects llm.c not as a curiosity but as a systems document: what the two-file architecture reveals about LLM training primitives, how the fused AdamW kernel achieves mixed precision without a framework, how stochastic rounding lets bf16 weights track fp32 training quality without fp32 master weights, and what the ecosystem of language ports (llm.cpp, llm.rs, llm.go, llm.cs) tells us about which parts of the stack are truly portable.

What It Actually Does

llm.c by Andrej Karpathy is LLM pretraining in raw C and CUDA, with no Python runtime, no PyTorch, no autograd engine. The repo has 29,700 GitHub stars and 3,500 forks. The Hacker News post hit 1,050 points on launch day.

Two files define the system:

train_gpt2.c: the CPU reference implementation. ~1,000 lines of clean fp32 C. Complete forward pass, backward pass, AdamW optimizer, data loader. Compiles with gcc -O3, runs immediately, exactly matches PyTorch reference output.

train_gpt2.cu: the production CUDA implementation. ~4,000 lines. Mixed precision bf16 training, cuDNN Flash Attention, ZeRO-1 optimizer sharding across multiple GPUs via NCCL, stochastic rounding for the bf16 weight update, fused AdamW kernel. Currently 7% faster than PyTorch Nightly on a single A100.

The repo explicitly maintains only C and CUDA; ports to other languages are kept out of the mainline and live in separate repositories. Community forks cover C++ (llm.cpp), Rust (llm.rs), Go (llm.go), and C# (llm.cs). All of them port the same primitives, which is itself a signal: the core training loop is a small, stable set of operations.

The current scope is reproducing the GPT-2 and GPT-3 model series, from 124M to 1.6B parameters. GPT-2 (124M) can be reproduced in about 90 minutes for approximately $20 in cloud GPU cost; Discussion #481 reports that figure on one 8xA100 80GB node rather than a single GPU.
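As a sanity check on the 124M and 1.6B figures, the parameter count can be derived from the model configuration alone. The sketch below assumes the standard GPT-2 tensor shapes (the same ones the per-layer pointer offsets in the forward pass rely on) and a 1024-token position table; the function name is hypothetical and not part of llm.c.

```c
#include <assert.h>
#include <stddef.h>

// Parameter count for a GPT-2 style model (illustrative helper, not from llm.c).
// V = vocab size, T = max sequence length, L = layers, C = channels.
// The classifier reuses wte (weight tying), so it adds no parameters of its own.
size_t gpt2_param_count(size_t V, size_t T, size_t L, size_t C) {
    assert(V > 0 && T > 0 && L > 0 && C > 0);
    size_t wte = V * C;                 // token embeddings
    size_t wpe = T * C;                 // position embeddings
    size_t per_layer =
          2 * C                         // ln1 weight + bias
        + 3 * C * C + 3 * C             // fused QKV projection
        + C * C + C                     // attention output projection
        + 2 * C                         // ln2 weight + bias
        + 4 * C * C + 4 * C             // MLP expand (4x)
        + 4 * C * C + C;                // MLP contract
    size_t lnf = 2 * C;                 // final layernorm weight + bias
    return wte + wpe + L * per_layer + lnf;
}
```

Calling gpt2_param_count(50257, 1024, 12, 768) gives the 124M configuration, and (50257, 1024, 48, 1600) gives the 1.6B one. Each layer contributes 12*C*C + 13*C parameters, so the transformer blocks dominate as the model grows.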

The Architecture

Focus on the two-file split. train_gpt2.c is the proof that the algorithm is correct. train_gpt2.cu is the proof that the algorithm is fast. The C file is the specification document for every language port in the ecosystem.

The Code

Snippet One: The Complete CPU Forward Pass Structure (train_gpt2.c)

// The entire GPT-2 forward pass in C, fp32. No framework. No autograd.
// This function is called both for training (targets != NULL) and inference.
void gpt2_forward(GPT2 *model, int* inputs, int* targets, size_t B, size_t T) {
    // validate inputs
    if (model->params_memory == NULL) {
        printf("Error: model was not initialized properly.\n"); exit(1);
    }

    int V = model->config.vocab_size;
    int Vp = model->config.padded_vocab_size; // ← padded for matmul alignment
    int L = model->config.num_layers;
    int NH = model->config.num_heads;
    int C = model->config.channels;

    // convenience shortcuts into the params struct
    ParameterTensors params = model->params;
    ActivationTensors acts = model->acts;
    float* residual;

    // forward pass: tokens → embedding → L transformer blocks → logits
    encoder_forward(acts.encoded, inputs, params.wte, params.wpe, B, T, C);

    // ← THIS is the model: a loop over L identical transformer blocks
    for (int l = 0; l < L; l++) {
        residual = l == 0 ? acts.encoded : acts.residual3 + (l-1) * B * T * C;

        // pointers into parameter memory for this layer
        float* l_ln1w = params.ln1w + l * C;
        float* l_ln1b = params.ln1b + l * C;
        float* l_qkvw = params.qkvw + l * 3*C * C; // Q, K, V projection fused
        float* l_qkvb = params.qkvb + l * 3*C;
        float* l_attprojw = params.attprojw + l * C * C;
        float* l_attprojb = params.attprojb + l * C;
        float* l_ln2w = params.ln2w + l * C;
        float* l_ln2b = params.ln2b + l * C;
        float* l_fcw = params.fcw + l * 4*C * C; // MLP expand 4x
        float* l_fcb = params.fcb + l * 4*C;
        float* l_fcprojw = params.fcprojw + l * C * 4*C; // MLP contract
        float* l_fcprojb = params.fcprojb + l * C;

        // pointers into activation memory for this layer
        float* l_ln1 = acts.ln1 + l * B * T * C;
        float* l_qkv = acts.qkv + l * B * T * 3*C;
        float* l_atty = acts.atty + l * B * T * C;
        float* l_preatt = acts.preatt + l * B * NH * T * T;
        float* l_att = acts.att + l * B * NH * T * T;
        float* l_attproj = acts.attproj + l * B * T * C;
        float* l_residual2 = acts.residual2 + l * B * T * C;
        float* l_ln2 = acts.ln2 + l * B * T * C;
        float* l_fch = acts.fch + l * B * T * 4*C;
        float* l_fch_gelu = acts.fch_gelu + l * B * T * 4*C;
        float* l_residual3 = acts.residual3 + l * B * T * C;

        // now do the forward pass
        layernorm_forward(l_ln1, acts.ln1_mean + l*B*T, acts.ln1_rstd + l*B*T,
                          residual, l_ln1w, l_ln1b, B, T, C);
        matmul_forward(l_qkv, l_ln1, l_qkvw, l_qkvb, B, T, C, 3*C);
        attention_forward(l_atty, l_preatt, l_att, l_qkv, B, T, C, NH);
        matmul_forward(l_attproj, l_atty, l_attprojw, l_attprojb, B, T, C, C);
        residual_forward(l_residual2, residual, l_attproj, B*T*C);
        layernorm_forward(l_ln2, acts.ln2_mean + l*B*T, acts.ln2_rstd + l*B*T,
                          l_residual2, l_ln2w, l_ln2b, B, T, C);
        matmul_forward(l_fch, l_ln2, l_fcw, l_fcb, B, T, C, 4*C);
        gelu_forward(l_fch_gelu, l_fch, B*T*4*C);
        matmul_forward(l_residual3, l_fch_gelu, l_fcprojw, l_fcprojb, B, T, 4*C, C);
        residual_forward(l_residual3, l_residual3, l_residual2, B*T*C);
    }

    residual = acts.residual3 + (L-1) * B * T * C;
    layernorm_forward(acts.lnf, acts.lnf_mean, acts.lnf_rstd, residual,
                      params.lnfw, params.lnfb, B, T, C);

    // ← weight tying: wte is used for both token embedding AND the final classifier
    // this saves V*C parameters (50257 * 768 = ~39M for GPT-2 124M)
    matmul_forward(acts.logits, acts.lnf, params.wte, NULL, B, T, C, Vp);
    softmax_forward(acts.probs, acts.logits, B, T, V, Vp);

    // only compute loss if targets are provided (training, not inference)
    if (targets != NULL) {
        // per-position losses go into acts.losses (B*T floats); mean_loss is their average
        crossentropy_forward(acts.losses, acts.probs, targets, B, T, Vp);
        float mean_loss = 0.0f;
        for (size_t i = 0; i < B*T; i++) { mean_loss += acts.losses[i]; }
        model->mean_loss = mean_loss / (B*T);
    } else {
        model->mean_loss = -1.0f; // sentinel: no targets, no loss
    }
}

Every LLM training primitive is visible in order: embedding, L repeated transformer blocks (pre-LN, fused QKV, causal self-attention, MLP with 4x expansion, GELU, residual), final layernorm, classifier with weight tying. No framework magic. This is the algorithm.
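To make "no framework magic" concrete, here is a minimal sketch of two of the primitives called in the snippet above: the matmul with bias and the residual add. The argument order follows the calls shown in the forward pass, and the weight layout is assumed to be (OC, C) row-major, which is also what lets wte double as the classifier. These are unoptimized illustrations with hypothetical `_naive` names, not the functions from train_gpt2.c.

```c
#include <assert.h>
#include <stddef.h>

// out[b,t,o] = bias[o] + sum_i inp[b,t,i] * weight[o,i]
// inp: (B,T,C), weight: (OC,C) row-major, bias: (OC) or NULL, out: (B,T,OC).
void matmul_forward_naive(float* out, const float* inp, const float* weight,
                          const float* bias, size_t B, size_t T, size_t C, size_t OC) {
    assert(out != NULL && inp != NULL && weight != NULL);
    for (size_t bt = 0; bt < B * T; bt++) {
        const float* x = inp + bt * C;     // one token's input vector
        float* y = out + bt * OC;          // one token's output vector
        for (size_t o = 0; o < OC; o++) {
            const float* w = weight + o * C;
            float acc = (bias != NULL) ? bias[o] : 0.0f;
            for (size_t i = 0; i < C; i++) {
                acc += x[i] * w[i];
            }
            y[o] = acc;
        }
    }
}

// out[i] = a[i] + b[i]: the residual connection is a plain elementwise add.
void residual_forward_naive(float* out, const float* a, const float* b, size_t N) {
    for (size_t i = 0; i < N; i++) {
        out[i] = a[i] + b[i];
    }
}
```

Passing NULL for the bias mirrors the classifier call in the forward pass, where the tied embedding matrix is used without a bias term.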

Snippet Two: The Fused AdamW Kernel with Stochastic Rounding (train_gpt2.cu)

// The fused AdamW kernel. This is where mixed precision training actually happens.
// Three precision types in one kernel: bf16 params, bf16 grads, fp32 optimizer state.
__global__ void adamw_kernel3(floatX* params_memory,  // bf16 on GPU
                              floatX* grads_memory,   // bf16 on GPU
                              float* m_memory,        // fp32 optimizer state (momentum)
                              float* v_memory,        // fp32 optimizer state (variance)
                              size_t num_parameters,
                              float learning_rate, float beta1, float beta2,
                              float beta1_correction, float beta2_correction,
                              float eps, float weight_decay,
                              unsigned int seed) {    // ← seed for stochastic rounding
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i >= num_parameters) { return; }

    // load bf16 param and grad, upcast to float for arithmetic
    // ← THIS is the trick: all optimizer math is in fp32, even if weights live in bf16
    float grad = (float)grads_memory[i];
    float param = (float)params_memory[i];
    float m = m_memory[i];
    float v = v_memory[i];

    // AdamW update in fp32 precision
    m = lerp(grad, m, beta1);       // momentum update
    v = lerp(grad*grad, v, beta2);  // variance update
    m_memory[i] = m;                // write back fp32 optimizer state
    v_memory[i] = v;

    // bias-corrected estimates
    float m_hat = m / beta1_correction;
    float v_hat = v / beta2_correction;

    // weight decay + adaptive gradient
    param -= learning_rate * (m_hat / (sqrtf(v_hat) + eps) + weight_decay * param);

    // ← STOCHASTIC ROUNDING: instead of deterministic round-to-nearest when
    // casting fp32 → bf16, we add a small random value first.
    // This prevents systematic bias accumulation from repeated rounding errors.
    // The seed is per-step, deterministic: same seed = reproducible training.
    unsigned int random = Get2dNoiseUint(threadIdx.x, blockIdx.x, seed);
    params_memory[i] = (floatX)stochastic_rounding(param, random);
}

Stochastic rounding is the underappreciated piece. Deterministic round-to-nearest-even when casting fp32 to bf16 loses small updates systematically: an update smaller than half the bf16 spacing at the weight's magnitude rounds back to the old value every time, so it never accumulates. Stochastic rounding instead rounds up or down with probability proportional to the distance to each neighbor, which makes the cast unbiased in expectation. Note that in the kernel above the optimizer state (m, v) stays in fp32; what stochastic rounding protects is the bf16 parameter write-back. In practice: bf16 weights with stochastic rounding match fp32 training loss on GPT-2 at 74 steps (3.499 vs 3.506), without the memory cost of fp32 master weights.
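The effect is easy to reproduce on the CPU. The sketch below is a plain C illustration, not the llm.c device code: it models bf16 as the top 16 bits of an IEEE-754 float, uses a xorshift32 generator as a stand-in for the kernel's noise function, and ignores NaN/Inf handling. All function names are hypothetical.

```c
#include <stdint.h>
#include <string.h>

// bf16 is modeled as the top 16 bits of an IEEE-754 float, stored in a uint16_t.
static float bf16_to_float(uint16_t h) {
    uint32_t bits = (uint32_t)h << 16;
    float x;
    memcpy(&x, &bits, sizeof x);
    return x;
}

// Deterministic round-to-nearest-even (NaN/Inf handling omitted for brevity).
static uint16_t bf16_round_nearest(float x) {
    uint32_t bits;
    memcpy(&bits, &x, sizeof bits);
    bits += 0x7FFFu + ((bits >> 16) & 1u);
    return (uint16_t)(bits >> 16);
}

// Stochastic rounding: add uniform noise over the 16 discarded bits, then truncate.
// The chance of rounding up equals the fraction of the way to the next bf16 value.
static uint16_t bf16_round_stochastic(float x, uint32_t random) {
    uint32_t bits;
    memcpy(&bits, &x, sizeof bits);
    bits += random & 0xFFFFu;
    return (uint16_t)(bits >> 16);
}

// xorshift32: a stand-in PRNG (an assumption; the kernel uses its own noise function).
static uint32_t xorshift32(uint32_t* state) {
    uint32_t s = *state;
    s ^= s << 13;
    s ^= s >> 17;
    s ^= s << 5;
    *state = s;
    return s;
}

// Apply `steps` updates of size `delta` to a bf16 weight that starts at 1.0.
// The arithmetic is done in fp32; only the stored weight is bf16.
float accumulate_bf16(int steps, float delta, int stochastic, uint32_t seed) {
    uint16_t w = bf16_round_nearest(1.0f);
    uint32_t rng = seed ? seed : 1u;   // xorshift state must be nonzero
    for (int i = 0; i < steps; i++) {
        float updated = bf16_to_float(w) + delta;
        w = stochastic ? bf16_round_stochastic(updated, xorshift32(&rng))
                       : bf16_round_nearest(updated);
    }
    return bf16_to_float(w);
}
```

Near 1.0 the bf16 spacing is 2^-7 (about 0.0078), so an update of 1e-4 is far below half a step. With accumulate_bf16(1000, 1e-4f, 0, seed) the weight never leaves 1.0; with the stochastic flag set it drifts upward toward the fp32 answer of roughly 1.1, with some run-to-run noise depending on the seed.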

It In Action: End-to-End Worked Example

Scenario: Reproduce GPT-2 (124M) from scratch on one A100, training on FineWeb-10B tokens.

Step 1: Bootstrap (Python, one-time only)

# Download and tokenize FineWeb-10B. Python is only needed here.
python dev/data/fineweb.py --shard_size=1e8
# Output: dev/data/fineweb10B/fineweb_train_*.bin (int32 token shards)
#         dev/data/fineweb10B/fineweb_val_*.bin

# Export GPT-2 weights from HuggingFace to a raw .bin file
python train_gpt2.py --write_tensors=1 --num_iterations=0
# Output: gpt2_124M_bf16.bin (raw bf16 weights, no Python needed to load)

Step 2: Compile and train (pure C/CUDA, no Python)

# Compile with cuDNN Flash Attention
# ← -O3 gives ~15% throughput improvement over -O2 on the CUDA path
make train_gpt2cu USE_CUDNN=1

# Launch training on single A100 80GB.
# Flag legend (a comment cannot follow a line-continuation backslash, so it lives here):
#   -e "d12"      124M model (12-layer, 768-dim)
#   -b 16 -t 1024 batch size 16, sequence length 1024
#   -d 524288     total batch size in tokens (512K)
#   -r 1          recompute activations (saves memory)
#   -z 1          ZeRO-1 optimizer sharding
#   -c 0.1        weight decay 0.1
#   -l 0.0006     peak learning rate
#   -u 700        warmup steps
#   -n 5000       checkpoint every 5000 steps
#   -v 250        validate every 250 steps
#   -h 1          hellaswag eval enabled
./train_gpt2cu \
    -i "dev/data/fineweb10B/fineweb_train_*.bin" \
    -j "dev/data/fineweb10B/fineweb_val_*.bin" \
    -o log124M \
    -e "d12" \
    -b 16 -t 1024 \
    -d 524288 \
    -r 1 \
    -z 1 \
    -c 0.1 \
    -l 0.0006 \
    -u 700 \
    -n 5000 \
    -v 250 \
    -h 1

Step 3: Training log output (real numbers)

step 19553/19560 | loss 3.339984 (+1.07z) | norm 0.2221 | lr 2.68e-10 | 7115ms | 56.7% bf16 MFU | 73,666 tok/s

step 19554/19560 | loss 3.297637 (-0.09z) | norm 0.2207 | lr 1.97e-10 | 7114ms | 56.7% bf16 MFU | 73,667 tok/s

step 19560/19560 | loss 3.291130 (-0.28z) | norm 0.2171 | lr 1.43e-10 | 7115ms | 56.7% bf16 MFU | 73,668 tok/s

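Real training numbers (1x A100 80GB SXM, bf16, cuDNN Flash Attention):

Throughput: ~73,600 tok/s at 56.7% MFU (Model FLOPs Utilization)

Total training time: at ~7.1 s per step, the 19,560 steps logged above take roughly 39 hours on this single GPU. The ~90-minute figure for GPT-2 (124M) on FineWeb-10B comes from an 8xA100 node (Discussion #481)

Total cost: ~$20 for that 90-minute 8xA100 run on Lambda Labs

Final validation loss: ~3.28 (matches OpenAI's GPT-2 124M release)

PyTorch Nightly comparison: llm.c is reported as ~7% faster (quoted as 167K tok/s vs 150K tok/s at vocab size 50257; those two rates imply a gap closer to 11%, so treat them as approximate)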
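The numbers in the log can be cross-checked with a few lines of arithmetic. The sketch below uses only values that appear in the command and log above (total batch 524,288 tokens, B=16, T=1024, 7,115 ms per step, 19,560 steps); the helper names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

// Micro-batches accumulated per optimizer step on a single GPU.
size_t grad_accum_steps(size_t total_batch_tokens, size_t B, size_t T) {
    assert(B > 0 && T > 0 && total_batch_tokens % (B * T) == 0);
    return total_batch_tokens / (B * T);
}

// Tokens processed per second, given the wall time of one optimizer step.
double tokens_per_second(size_t total_batch_tokens, double step_ms) {
    assert(step_ms > 0.0);
    return (double)total_batch_tokens / (step_ms / 1000.0);
}

// Total tokens seen over a run.
unsigned long long total_tokens(unsigned long long steps,
                                unsigned long long total_batch_tokens) {
    return steps * total_batch_tokens;
}

// Wall-clock hours for `steps` optimizer steps at a fixed step time.
double run_hours(size_t steps, double step_ms) {
    return (double)steps * step_ms / 1000.0 / 3600.0;
}
```

With these inputs, each optimizer step accumulates 32 micro-batches of 16 x 1024 tokens, throughput comes out near 73.7K tok/s (matching the log), and the run covers about 10.26B tokens. The same step time implies roughly 38.7 hours of wall clock for this single-GPU configuration, so a 90-minute reproduction needs a multi-GPU node.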

Step 4: Verify correctness against PyTorch

# The C implementation passes this test against the PyTorch reference.
# 10 Adam steps, all losses must match within tolerance.
make test_gpt2 && ./test_gpt2
# Output: overall okay
# This is how Karpathy ensures the hand-rolled gradients are correct.

Why This Design Works, and What It Trades Away

The two-file architecture is correct because it separates two different problems: correctness verification and performance optimization. train_gpt2.c is the ground truth. Every optimization in train_gpt2.cu must produce identical (within tolerance) results to the C reference. This is enforced by test_gpt2.cu, which runs 10 training steps of both implementations and checks that losses match. Without the C reference, there is no way to verify that a CUDA optimization did not silently corrupt the gradients.
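A tolerance check of this kind is only a few lines. The following is a minimal sketch of the idea, assuming an absolute-difference tolerance; the function name and output format are hypothetical, not the harness in the repo.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

// Returns 1 if every element of `actual` is within `tol` of `expected`, else 0.
// Prints the largest absolute difference so regressions are easy to spot.
int check_tensor_sketch(const float* actual, const float* expected, size_t n,
                        float tol, const char* label) {
    assert(actual != NULL && expected != NULL && label != NULL);
    int ok = 1;
    float max_diff = 0.0f;
    for (size_t i = 0; i < n; i++) {
        float diff = actual[i] - expected[i];
        if (diff < 0.0f) { diff = -diff; }      // absolute value without libm
        if (diff > max_diff) { max_diff = diff; }
        if (!(diff <= tol)) { ok = 0; }         // written this way so NaN also fails
    }
    printf("%s: %s (max abs diff %e)\n", label, ok ? "OK" : "NOT OK", max_diff);
    return ok;
}
```

Running the same check on losses, logits, and gradients after each step is what turns "the kernel looks right" into "the kernel agrees with the reference to within a stated tolerance".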

The PR policy is the architectural decision that deserves the most attention. Karpathy explicitly states that a pull request improving performance by 2% at the cost of 500 lines of complex C will be rejected. The mainline must remain readable. The dev/cuda/ folder exists for experimental kernels with higher complexity tolerance. This is the correct design for an educational and research codebase: the mainline is the document, not the benchmark.

The stochastic rounding implementation in adamw_kernel3 is the single most technically sophisticated decision in the codebase. Mixed precision training typically keeps fp32 master weights alongside bf16 training weights, adding 4 bytes per parameter on top of the fp32 m and v buffers. Stochastic rounding lets the bf16 weights be updated in place and still track fp32 training quality. The random seed is per-step and deterministic, meaning training is reproducible from checkpoints. The mechanism: before casting the fp32 param to bf16, add a uniformly distributed random value spanning one bf16 unit in the last place (the 16 mantissa bits the cast discards), then truncate. The result equals the fp32 value in expectation, so rounding error no longer accumulates in one direction.
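The memory effect of dropping the master copy can be worked out from the buffer types in the kernel signature. The sketch below counts only weights, gradients, and optimizer buffers (activations are excluded), assuming 2 bytes per bf16 value and 4 bytes per fp32 value; the struct and function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

// Per-buffer training memory in bytes (activations not included).
typedef struct {
    size_t params_bf16;   // 2 bytes per parameter
    size_t grads_bf16;    // 2 bytes per parameter
    size_t adam_m_fp32;   // 4 bytes per parameter
    size_t adam_v_fp32;   // 4 bytes per parameter
    size_t master_fp32;   // 4 bytes per parameter, or 0 when omitted
} TrainMemory;

TrainMemory train_memory(size_t num_params, int keep_master_weights) {
    assert(num_params > 0);
    TrainMemory m;
    m.params_bf16 = 2 * num_params;
    m.grads_bf16  = 2 * num_params;
    m.adam_m_fp32 = 4 * num_params;
    m.adam_v_fp32 = 4 * num_params;
    m.master_fp32 = keep_master_weights ? 4 * num_params : 0;
    return m;
}

size_t train_memory_total(TrainMemory m) {
    return m.params_bf16 + m.grads_bf16 + m.adam_m_fp32 + m.adam_v_fp32 + m.master_fp32;
}
```

For the 124M model this is 16 bytes per parameter with master weights and 12 without, about 1.99 GB versus 1.49 GB. Put differently, the master copy is one third of the fp32 state (m, v, master), so removing it is a meaningful saving but not a halving.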

What llm.c trades away:

Architecture flexibility. The codebase is hardcoded to GPT-2 and GPT-3 architecture variants. There is no module registry, no layer abstraction, no config-driven architecture construction. Adding a new architecture requires editing the C code directly. This is intentional: the goal is a minimal, verified implementation of one family of models, not a general framework.

Non-NVIDIA hardware. llm.c is CUDA only. AMD, Apple Silicon, and other GPU targets require separate forks. The community has produced these (tinygrad, gpu.cpp) but they are separate codebases. The CUDA dependency is explicit and unapologetic.

Technical Moats

The correctness testing discipline. Every CUDA optimization is verified against the C reference implementation. The test harness compares logits, losses, and gradients across 10 training steps with explicit tolerances. This is harder to replicate than any individual kernel optimization. It is the reason the community trusts llm.c to teach the real algorithm, not a PyTorch approximation of it.

The Karpathy curriculum. llm.c sits at the end of a pedagogical arc: micrograd (scalar autograd) → nanoGPT (minimal Python GPT training) → llama2.c (inference in C) → llm.c (training in C/CUDA). Each repo is self-contained and pedagogically complete. The community that formed around "Zero to Hero" videos is already primed to read the code. That installed base of engaged learners is not a technical artifact.

The language-port ecosystem signal. The existence of llm.cpp, llm.rs, llm.go, and llm.cs is not a dilution of llm.c's value. It is a validation of the design: the core training primitives are so clearly defined in the C reference that porting them to other languages is a straightforward exercise. Any codebase that generates this many independent ports has succeeded as a specification.

Insights

Insight One: llm.c is not a competitor to PyTorch. It is a proof that PyTorch's performance overhead is a choice, not a physical constraint.

The common narrative is that PyTorch is the unavoidable cost of doing LLM training. llm.c shows this is false. A 7% throughput improvement over PyTorch Nightly on the same hardware, with zero framework overhead, proves that the framework itself has non-trivial cost. This is not a criticism of PyTorch, which provides enormous value through autograd, distributed training primitives, and ecosystem integration. The insight is that the performance headroom exists, that the kernel-level implementation is accessible to small teams, and that framework overhead is a legitimate optimization target for production training runs at scale. At 100-GPU training runs, 7% faster means 7% less GPU cost. That is not academic.

Insight Two: The "no ports" policy and the "readability over performance" PR policy are the same decision, and most engineers reading the repo miss this.

Karpathy's decision to keep llm.c CUDA-only and to reject complexity-adding PRs is not conservatism. It is a deliberate choice about what the codebase is for. llm.c is a specification and a teaching tool. Allowing ports would mean maintaining multiple implementations. Allowing complexity-adding PRs would mean the mainline stops being readable as a document of the algorithm. The dev/ folder absorbs experimental kernels precisely so the mainline stays clean. The language ports (llm.rs, llm.go, etc.) exist precisely because the mainline is simple enough to port. The "no ports" policy enables the ports. This is a design insight that most maintainers of educational codebases fail to internalize.

Takeaway

Training GPT-2 (124M) to match OpenAI's released model quality takes about 90 minutes and $20 on an 8xA100 node, and requires no Python once the data is tokenized, using a codebase that fits in ~4,000 lines of C/CUDA. The entire forward pass, backward pass, AdamW optimizer, and data loader are visible, readable, and individually verifiable against a 1,000-line fp32 C reference. There is no black box.

The comparison point: PyTorch itself is 245MB of compiled code. The llm.c binary compiles in under 5 seconds with make. This is not a demonstration of C being better than Python. It is a demonstration of what happens when you remove every layer of abstraction that is not strictly necessary to train the model.

TL;DR For Engineers

train_gpt2.c (~1,000 lines, CPU fp32) is the correctness reference. train_gpt2.cu (~4,000 lines, CUDA bf16) is the performance implementation. Every optimization in .cu must match .c output. This two-file split is the architecture.

llm.c is ~7% faster than PyTorch Nightly on GPT-2 (124M) single-GPU training (quoted at ~167K tok/s vs ~150K tok/s on one A100), and reaches 56.7% bf16 MFU.

Stochastic rounding in adamw_kernel3 lets the bf16 weights be updated without fp32 master weights while matching fp32 training loss; the optimizer state (m, v) stays in fp32. This saves 4 bytes per parameter relative to the standard mixed-precision approach.

The PR policy (reject +2% perf if it costs +500 lines) is a design decision, not a maintenance preference. The mainline is a specification document. The dev/ folder is the experimental zone.

GPT-2 (124M) reproduces in 90 minutes for $20 on one 8xA100 node (Discussion #481). GPT-2 (1.6B) reproduces in 24 hours for $672 on one 8xH100 node.

The Right Frame Is Not "C Is Better Than Python." It Is "What Is Actually Necessary?"

llm.c answers that question precisely. Forward pass: ~20 primitive operations (embedding, layernorm, matmul, attention, GELU, residual, softmax, cross-entropy). Backward pass: the same 20, in reverse. Optimizer: AdamW in ~20 lines of C. Data loader: read int32 tokens from binary shards. That is the complete algorithm. Everything else is framework. When you see that the complete algorithm fits in 1,000 lines of readable C, you start asking different questions about the production ML stacks your team runs on.

References

llm.c GitHub Repository, 29.7k stars, 3.5k forks, Karpathy, 2024

Reproducing GPT-2 (124M) in llm.c in 90 minutes for $20, Discussion #481

State of the Union May 3, 2024: 7% faster than PyTorch, Discussion #344

Reproducing GPT-2 (1.6B): 8xH100, 24 hours, $672, Discussion #677

nanoGPT, 57k stars, Karpathy's Python predecessor

llama2.c, 19.4k stars, Karpathy's C inference predecessor

micrograd, 15.6k stars, scalar autograd engine

llm.cpp, C++ port

llm.rs, Rust port

llm.go, Go port

llm.cs, C# port

modded-nanogpt, speed-focused nanoGPT fork

Attention Is All You Need, arXiv:1706.03762, Vaswani et al., 2017

Language Models are Unsupervised Multitask Learners (GPT-2), Radford et al., 2019

llm.c (Karpathy, 2024) trains GPT-2 in raw C/CUDA with no PyTorch or Python runtime: a ~1,000-line CPU fp32 reference file (train_gpt2.c) defines the algorithm, a ~4,000-line CUDA file (train_gpt2.cu) implements it with bf16 mixed precision, cuDNN Flash Attention, ZeRO-1 optimizer sharding, and stochastic rounding, running 7% faster than PyTorch Nightly at 56.7% bf16 MFU on a single A100. The correctness discipline (every CUDA optimization verified against the C reference via test_gpt2.cu) and the PR policy (reject complexity-adding changes that cost readability) make llm.c as much a specification document as a training system, enabling a community of language ports (C++, Rust, Go, C#) that all implement the same stable primitive set.
