In our previous post, we explored ClawMax and the idea that the missing control plane for multi-agent systems is fundamentally a dashboard problem.

In this blog, we shift focus to OpenHands, diving into how it approaches agent workflows, developer interaction, and what it means for building more practical, hands-on AI systems.

Read the earlier piece here: ClawMax: The Missing Control Plane For Multi-Agent Systems

Today's AI coding tools (Copilot, Claude, ChatGPT) are powerful, but they share a fundamental limitation: they live outside your workflow. You paste code in, get a suggestion back, then manually apply it, run the tests, read the failures, paste those back in, and loop.

The AI never directly touches your repository. It never runs your build. It never sees the test failure it caused. Every round-trip between you and the model is friction that slows down engineering.

This model breaks down completely on complex, multi-step tasks. Ask an AI to 'add rate limiting to the payments API, write integration tests, and make sure nothing in the auth module breaks' and you'll spend more time managing the AI's output than writing code yourself.

What the industry actually needs is an agent that can own the task end-to-end: plan the steps, execute them in a real environment, read the results, fix its own mistakes, and deliver a working output. That's exactly what OpenHands is built to do.

OpenHands: An Autonomous AI Software Engineer

OpenHands (formerly OpenDevin) is an open-source platform for autonomous software engineering agents, built by All Hands AI and a growing open-source community.

It was first released in early 2024 and quickly became one of the most-starred AI engineering repositories on GitHub, reaching 40,000+ stars within months of launch.

The core thesis: give an AI agent a real Linux environment, a real terminal, real file system access, and real browser capability, then give it a goal and let it work.

Unlike narrow tools that wrap a single LLM call, OpenHands provides a full agentic runtime. Agents can use any LLM as their reasoning core (Claude 3.5 Sonnet, GPT-4o, Llama 3, Mistral) via a unified interface.

The platform handles the scaffolding: tool definitions, environment isolation, action history, memory, and human-in-the-loop approval checkpoints. Teams can deploy it locally via Docker, on-premise, or as a managed cloud service.

OpenHands vs. traditional AI coding tools at a glance:

GitHub Copilot → autocomplete inside your editor. You apply every suggestion manually.

Claude/ChatGPT → conversational. You copy-paste in, copy-paste suggestions out.

OpenHands → agentic. It has file system access, a terminal, and a browser. It executes, reads results, and self-corrects.

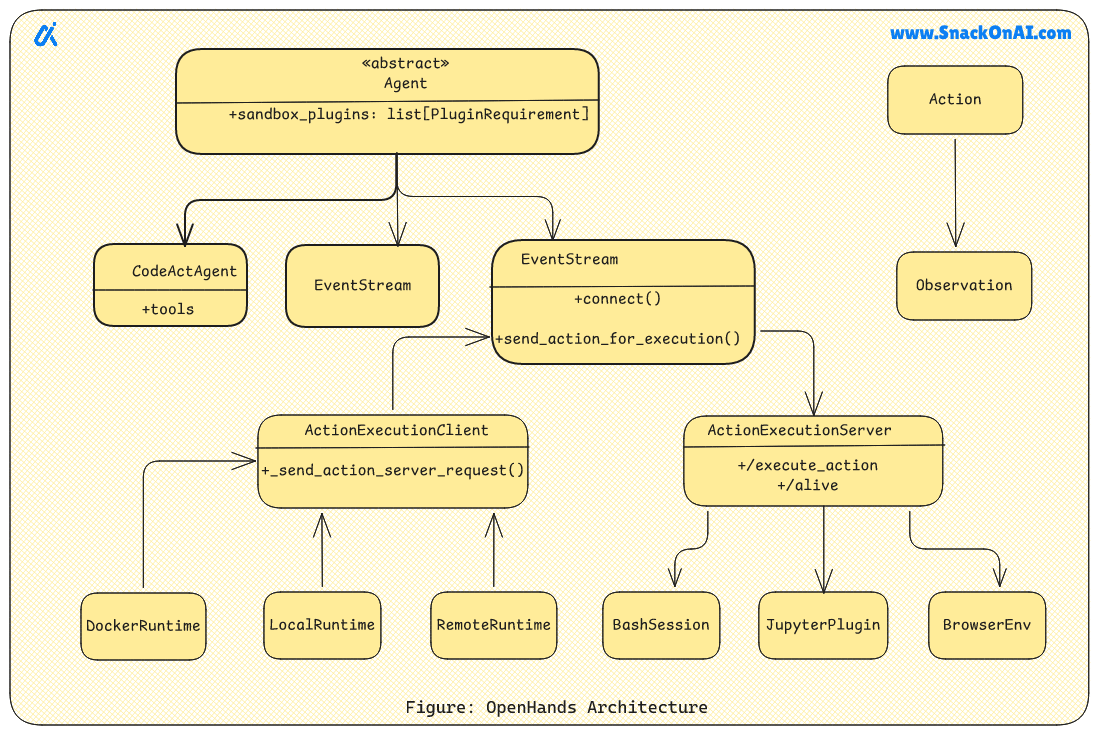

Architecture: How OpenHands Actually Works

OpenHands is built around three layers.

The first is the Agent Core: the LLM-powered reasoning engine that receives a goal, breaks it into steps, decides which tools to use, and interprets results. It maintains an action history and a working memory of the current task state.

The second is the Runtime Sandbox: a Docker container (or cloud VM) where every action is executed in isolation. The agent writes files here, runs commands here, and installs packages here. Nothing touches the host machine without explicit mount permissions.

The third is the Tool Layer: a set of capabilities the agent can invoke, including a bash shell, file read/write, a web browser, the GitHub API, and custom plugins.

The key architectural decision is the separation between the agent's reasoning (which happens in the LLM) and its execution (which happens in the sandbox). This means the LLM never needs to 'imagine' what a command's output looks like; it gets the real output back as its next input. The agentic loop is: think → act → observe → repeat, until the goal is achieved or the agent asks for human input.
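The think → act → observe loop can be sketched in a few lines of TypeScript. This is a minimal illustration, not OpenHands' actual implementation: the `Action`, `Observation`, `Policy`, and `Sandbox` names are hypothetical, the "LLM" is a scripted stand-in, and the sandbox is an in-memory fake rather than a Docker container.

```typescript
// Hypothetical types for illustration; OpenHands' real classes differ.
type Action =
  | { kind: "run"; command: string }
  | { kind: "finish"; summary: string }
  | { kind: "ask_human"; question: string };

interface Observation {
  action: Action;
  output: string;
}

// The reasoning side: given the history so far, pick the next action.
type Policy = (goal: string, history: Observation[]) => Action;

// The execution side: runs a command and returns its real output.
type Sandbox = (command: string) => string;

interface LoopResult {
  status: "finished" | "needs_human" | "step_limit";
  history: Observation[];
  message: string;
}

function runAgentLoop(
  goal: string,
  think: Policy,
  sandbox: Sandbox,
  maxSteps: number = 20
): LoopResult {
  const history: Observation[] = [];
  for (let step = 0; step < maxSteps; step++) {
    const action = think(goal, history); // think
    if (action.kind === "finish") {
      return { status: "finished", history, message: action.summary };
    }
    if (action.kind === "ask_human") {
      return { status: "needs_human", history, message: action.question };
    }
    const output = sandbox(action.command); // act
    history.push({ action, output }); // observe: real output feeds the next step
  }
  return { status: "step_limit", history, message: "step budget exhausted" };
}

// Scripted stand-in for an LLM: rerun the tests until the output shows a pass.
const scriptedPolicy: Policy = (_goal, history) => {
  const last = history[history.length - 1];
  if (last !== undefined && last.output.includes("0 failed")) {
    return { kind: "finish", summary: "tests pass" };
  }
  return { kind: "run", command: "npm test" };
};

// Fake sandbox: the first test run fails, later runs pass.
let runs = 0;
const fakeSandbox: Sandbox = (_command) => {
  runs += 1;
  return runs === 1 ? "1 failed" : "64 passed, 0 failed";
};

const result = runAgentLoop("make the tests pass", scriptedPolicy, fakeSandbox);
// result.status is "finished" after two sandbox runs
```

The step budget (`maxSteps`) is a design choice of this sketch: a loop that feeds real output back into the reasoning step needs some bound so that a task the agent cannot solve ends in a clear status instead of running forever.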

Getting Started With OpenHands

OpenHands runs inside Docker. The container bundles the runtime, sandbox, and web UI. The only requirements on your host machine are Docker itself and an API key for your chosen LLM. The entire setup is a single docker run command.

bash installation & first run

    # Pull and run OpenHands (image pinned to the 0.15 tag)
    # --name lets the docker exec command further down find this container
    docker run -it \
        --name openhands \
        --pull=always \
        -e SANDBOX_USER_ID=$(id -u) \
        -e LLM_MODEL=claude-3-5-sonnet-20241022 \
        -e LLM_API_KEY=sk-ant-xxxxxxxxxxxxxxxx \
        -v /var/run/docker.sock:/var/run/docker.sock \
        -v ~/.openhands-state:/.openhands-state \
        -v $(pwd):/workspace \
        -p 3000:3000 \
        --add-host host.docker.internal:host-gateway \
        ghcr.io/all-hands-ai/openhands:0.15

    # Access the web UI (open is macOS-specific; elsewhere, visit the URL in a browser)
    open http://localhost:3000

    # Or use the CLI directly (headless mode), from a second terminal
    docker exec openhands python -m openhands.core.main \
        -t 'Fix all TypeScript strict mode errors in src/' \
        --workspace /workspace

The -v $(pwd):/workspace mount is critical

This is what gives OpenHands access to your actual project files.

The agent reads and writes inside /workspace inside the container which maps directly to your current directory on disk.

Without this mount, the agent works only on files it creates itself inside the ephemeral container.
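To make the mount behaviour concrete, here is a small TypeScript sketch of how a path inside the container resolves to a path on the host under a bind mount. The `BindMount` type and `toHostPath` function are hypothetical helpers written for this post, not part of Docker or OpenHands, and `/home/dev/my-api` is a made-up host directory standing in for `$(pwd)`.

```typescript
// Hypothetical model of a `-v hostDir:containerDir` bind mount.
interface BindMount {
  hostDir: string;
  containerDir: string;
}

// Returns the host path backing a container path, or undefined when the
// path is not under any mount (it then lives only inside the container).
function toHostPath(
  containerPath: string,
  mounts: BindMount[]
): string | undefined {
  for (const mount of mounts) {
    if (containerPath === mount.containerDir) {
      return mount.hostDir;
    }
    const prefix = mount.containerDir + "/";
    if (containerPath.startsWith(prefix)) {
      return mount.hostDir + "/" + containerPath.slice(prefix.length);
    }
  }
  return undefined;
}

// Assumed host directory; in the docker run command this is $(pwd).
const mounts: BindMount[] = [
  { hostDir: "/home/dev/my-api", containerDir: "/workspace" },
];

// A file the agent edits under /workspace lands in the host project.
const edited = toHostPath("/workspace/src/middleware/auth.ts", mounts);
// → "/home/dev/my-api/src/middleware/auth.ts"

// A file written elsewhere has no host counterpart and is lost with the container.
const scratch = toHostPath("/tmp/build-cache/out.js", mounts);
// → undefined
```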

Fixing A TypeScript Project With OpenHands

Here's a real example. Your team has a Node.js Express API that was originally written in JavaScript and later incrementally converted to TypeScript with strict: false. The new mandate: enable strict mode, fix all type errors across 23 files, and ensure the test suite still passes. This is exactly the kind of task that's tedious for a human (hours of mechanical fixes) but well suited to an autonomous agent.
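To make the task concrete, the TypeScript sketch below shows the kind of code that compiles with strict: false but is rejected under strict mode, along with a typed fix that avoids 'any'. The `Payment` type and the `refundAmount` and `isPayment` functions are invented for illustration and are not taken from the project described here.

```typescript
// Hypothetical domain type, for illustration only.
interface Payment {
  id: string;
  amount?: number; // optional: may be undefined at runtime
  currency: string;
}

// With strict: false, code like this compiles:
//   function refundAmount(payment) { return payment.amount * 0.5; }
// With strict: true, the untyped parameter is an implicit 'any' (TS7006),
// and once it is typed, payment.amount is flagged as possibly undefined.

// A strict-safe version: explicit parameter type plus a narrowing check.
function refundAmount(payment: Payment): number {
  if (payment.amount === undefined) {
    return 0;
  }
  return payment.amount * 0.5;
}

// A user-defined type guard narrows unknown input without resorting to 'any'.
function isPayment(value: unknown): value is Payment {
  if (typeof value !== "object" || value === null) {
    return false;
  }
  const candidate = value as Record<string, unknown>;
  return (
    typeof candidate["id"] === "string" &&
    typeof candidate["currency"] === "string" &&
    (candidate["amount"] === undefined ||
      typeof candidate["amount"] === "number")
  );
}

const full = refundAmount({ id: "p1", amount: 80, currency: "USD" }); // 40
const missing = refundAmount({ id: "p2", currency: "USD" }); // 0
const rejected = isPayment({ id: "p3" }); // false: currency is missing
```

Returning 0 for a missing amount is only a placeholder policy for the sketch; in a real payments service the right behaviour for missing data is a business decision, which is part of why these fixes are more than find-and-replace.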

Step 1: Give OpenHands the Goal

openhands prompt

    # Enter into the OpenHands web UI or CLI:
    > Enable TypeScript strict mode in this project. Steps required:
    > 1. Set "strict": true in tsconfig.json
    > 2. Run tsc --noEmit to find all type errors
    > 3. Fix every error; do not use 'any' as a shortcut
    > 4. After fixes, run the full test suite (npm test)
    > 5. All tests must pass before you finish
    > 6. Summarize every change you made in a CHANGES.md file

Step 2: What the Agent Does (Actual Execution Log)

agent execution log

    [1] file_read: tsconfig.json
        → strict: false found. Setting to true.
    [2] file_write: tsconfig.json
        → 'strict': true
    [3] bash: npx tsc --noEmit 2>&1 | head -60
        → 47 errors across 23 files
    [4] bash: npx tsc --noEmit 2>&1 | grep 'error TS' | sort | uniq -c | sort -rn
        → 18x TS2345 (argument type mismatch)
        → 14x TS7006 (parameter implicitly has 'any' type)
        → 9x TS2532 (object possibly undefined)
        → 6x TS2339 (property does not exist on type)

    # Agent prioritizes: fix highest-frequency errors first
    [5] file_read: src/middleware/auth.ts
    [6] file_write: src/middleware/auth.ts
        → Added explicit param types to 3 middleware functions
        → Replaced implicit any with Request, Response, NextFunction
    [7] file_read: src/services/paymentService.ts
    [8] file_write: src/services/paymentService.ts
        → Added optional chaining for 4 possibly-undefined accessors
        → Narrowed union type with type guard in processRefund()

    # ... (continues across 23 files) ...
    [31] bash: npx tsc --noEmit
        → 0 errors ✓
    [32] bash: npm test
        → FAIL src/services/paymentService.test.ts
        → TypeError: Cannot read properties of undefined (reading 'amount')

    # Agent reads the failure, traces to root cause
    [33] file_read: src/services/paymentService.test.ts
    [34] file_write: src/services/paymentService.test.ts
        → Test fixture was missing required 'currency' field (now enforced by strict types)
    [35] bash: npm test
        → 64 passed, 0 failed ✓
    [36] file_write: CHANGES.md
        → Summary: 23 files modified, 47 errors resolved, 0 'any' shortcuts used

What made this task hard without an agent:

Fixing 47 TypeScript strict errors across 23 files is ~3–4 hours of mechanical work for a developer.

The agent completed it in 11 minutes of wall-clock time across 36 tool calls.

Crucially: the agent also caught and fixed a test fixture bug that a find-replace approach would have missed entirely.

The self-correction loop (run tests → read failure → fix → re-run) is the difference-maker.
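Step [4] of the log, where the agent groups compiler errors by code before deciding what to fix first, is easy to reproduce outside the agent. The TypeScript sketch below tallies `error TSxxxx` codes from raw `tsc` output; the `tallyErrorCodes` helper and the three sample lines are made up for illustration and are not taken from the project or from OpenHands.

```typescript
// Count occurrences of each TypeScript error code in raw `tsc` output,
// sorted from most to least frequent.
function tallyErrorCodes(tscOutput: string): Array<[string, number]> {
  const counts = new Map<string, number>();
  const pattern = /error (TS\d+)/g;
  let match: RegExpExecArray | null = pattern.exec(tscOutput);
  while (match !== null) {
    const code = match[1];
    counts.set(code, (counts.get(code) || 0) + 1);
    match = pattern.exec(tscOutput);
  }
  return Array.from(counts.entries()).sort((a, b) => b[1] - a[1]);
}

// Made-up sample in the shape tsc prints without --pretty.
const sampleOutput = [
  "src/a.ts(3,10): error TS7006: Parameter 'req' implicitly has an 'any' type.",
  "src/b.ts(8,5): error TS2345: Argument of type 'string' is not assignable to parameter of type 'number'.",
  "src/b.ts(9,5): error TS2345: Argument of type 'string' is not assignable to parameter of type 'number'.",
].join("\n");

const tally = tallyErrorCodes(sampleOutput);
// → [["TS2345", 2], ["TS7006", 1]]
```

Extracting only the error code before counting matters: each full diagnostic line includes a file path and position, so counting whole lines would treat almost every error as unique.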

Automating GitHub Issues With OpenHands

OpenHands ships with a GitHub Actions integration that can automatically triage and fix issues when they are labeled. This turns OpenHands into a background engineer that picks up labeled issues and opens PRs for review without anyone writing the fix by hand, a genuine force multiplier for small teams.
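The gating idea, acting only when a specific label is applied, can be expressed as a small predicate. The TypeScript sketch below models just the fields of a GitHub `issues` event that such a check needs; the `IssuesEvent` type and `shouldResolve` function are hypothetical, the issue number is sample data, and `fix-with-ai` is the label used by the workflow in this section.

```typescript
// Minimal slice of a GitHub `issues` event payload (only the fields used here).
interface IssuesEvent {
  action: string;
  label?: { name: string };
  issue: { number: number };
}

// True only when the event is a label being added and it is the trigger label.
function shouldResolve(event: IssuesEvent, triggerLabel: string): boolean {
  return event.action === "labeled" && event.label?.name === triggerLabel;
}

// Sample events (made-up issue number).
const labeledForAi: IssuesEvent = {
  action: "labeled",
  label: { name: "fix-with-ai" },
  issue: { number: 42 },
};
const labeledOther: IssuesEvent = {
  action: "labeled",
  label: { name: "bug" },
  issue: { number: 42 },
};
const opened: IssuesEvent = { action: "opened", issue: { number: 42 } };

const decisions = [labeledForAi, labeledOther, opened].map((event) =>
  shouldResolve(event, "fix-with-ai")
);
// → [true, false, false]
```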

yaml GitHub Actions integration

    # .github/workflows/openhands-resolver.yml
    name: OpenHands Auto-Resolver

    on:
      issues:
        types: [labeled]

    jobs:
      resolve:
        if: github.event.label.name == 'fix-with-ai'
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
          - name: Run OpenHands resolver
            uses: all-hands-ai/[email protected]
            with:
              issue-number: ${{ github.event.issue.number }}
              llm-model: claude-3-5-sonnet-20241022
              llm-api-key: ${{ secrets.ANTHROPIC_API_KEY }}

    # If OpenHands produces a fix, it automatically:
    # 1. Commits the changes to a new branch
    # 2. Opens a PR with a summary of what it changed and why
    # 3. Links the PR back to the original issue
    # A human reviews and merges; the agent does the coding

Key Use Cases Of OpenHands

Here are the key OpenHands use cases:

1. Automated Test Generation: Point OpenHands at any untested module and it will analyze the logic, write pytest or Jest test cases, run them, and fix failures, delivering a passing test suite without you writing a single test manually.

2. Legacy Code Migration: Migrating from JavaScript to TypeScript, Python 2 to 3, or an old framework to a new one involves hundreds of mechanical changes. OpenHands handles the full sweep across all files, runs the build, and self-corrects errors it introduces.

3. Dependency Upgrades: Upgrading major versions (e.g., React 17 → 18, Django 3 → 4) often breaks APIs across dozens of files. OpenHands reads changelogs, applies fixes project-wide, and verifies the build passes before finishing.

4. Bug Fixing from GitHub Issues: Via the GitHub Actions integration, OpenHands reads a labeled issue, reproduces the bug in its sandbox, patches the code, and opens a PR; no human writes a line of code.

Why OpenHands Matters

OpenHands is the bridge between AI as a "thinker" and AI as a "doer." It acknowledges that modern software development involves more than just writing syntax; it also involves environment setup, testing, and debugging. By moving the AI into a sandbox where it can actually use a terminal, OpenHands empowers developers to move from micro-managing code to macro-managing systems.

OpenHands transforms the developer's role from a manual builder to an orchestrator, turning a high-level vision into a fully tested codebase with an autonomous, command-line-driven engineer that handles the heavy lifting inside a secure sandbox.


OpenHands enables autonomous, human-guided AI agents to plan, execute, test, and refine real software projects end-to-end, transforming AI from a passive assistant into an active engineering collaborator.
