SnackOnAI Engineering | Senior AI Systems Researcher | Technical Deep Dive | April 14, 2026

The AI community has accepted a lie: that fine-tuning large models requires expensive multi-GPU clusters, weeks of compute, and cloud bills that rival small engineering salaries. Unsloth disagrees, and it has the benchmarks to prove it. But here's what most coverage misses: Unsloth is not a fine-tuning framework. It is a kernel replacement library with a training scaffold on top. That distinction changes everything about how you should think about using it.

What It Actually Does

Fine-tuning a 70B parameter model in FP16 historically required roughly 780GB of GPU memory for full fine-tuning. That forces you onto multi-GPU clusters at tens of dollars per hour. The community's response was LoRA (Low-Rank Adaptation) and QLoRA (quantized LoRA): freeze the base model weights, train small adapter matrices, and, for QLoRA, quantize the base to 4-bit. These techniques collapsed memory requirements dramatically. But even with LoRA and QLoRA, the training kernels running under frameworks like Hugging Face Transformers were generic PyTorch operations: correct, but not optimized for the specific compute patterns of LLM fine-tuning.

Unsloth's bet is simple: rewrite every hot loop in OpenAI's Triton language, derive the math by hand, implement a custom backpropagation engine, and eliminate every redundant memory copy. The result is 2–5x faster training with 70–90% less VRAM versus standard Hugging Face setups, with mathematically exact outputs, no approximation, no accuracy loss.

At 58.6k GitHub stars and 5k forks as of April 2026, the community signal is unambiguous. Unsloth has become the default starting point for practitioners fine-tuning on consumer hardware.

The Architecture, Unpacked

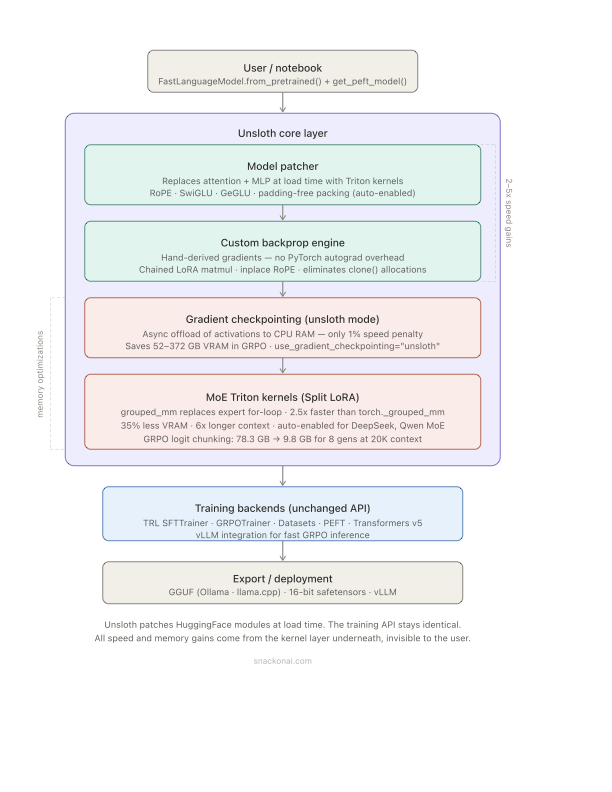

Caption: Unsloth patches HuggingFace model modules at load time, replacing generic PyTorch ops with Triton kernels. The training API stays identical: TRL, PEFT, and Transformers all work unchanged. The speed and memory gains come entirely from the layer underneath.

The three load-bearing components are:

The Model Patcher intercepts FastLanguageModel.from_pretrained() and replaces PyTorch attention and MLP modules with Triton-optimized equivalents. This is surgical: only the compute-heavy paths are touched. The rest of the Transformers stack runs as-is.

The Custom Backprop Engine is the most aggressive decision. Instead of relying on PyTorch autograd, Unsloth derives gradients analytically for the specific operations used in LoRA and QLoRA training and implements them in Triton. This eliminates the overhead of PyTorch's autograd graph construction and the intermediate tensor allocations that come with it.

Unsloth Gradient Checkpointing replaces standard PyTorch gradient checkpointing with an async CPU offload strategy. Intermediate activations are pushed to system RAM asynchronously during the forward pass, freeing VRAM for the next computation. The penalty is approximately 1% training slowdown, a negligible cost for the memory savings, which can reach 370GB in GRPO runs with 8 generations at 20K context.
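To make "derives gradients analytically" concrete for the Custom Backprop Engine, here is a sketch of the hand-derived backward pass for a single LoRA linear layer. The notation is an assumption for illustration, not taken from Unsloth's source: X is the input (n × d), W the frozen base weight (d × k), A (d × r) and B (r × k) the trainable adapters, s the LoRA scaling factor, and G the upstream gradient with respect to the output.

```latex
\begin{aligned}
Y &= XW + s\,(XA)B, \qquad G = \frac{\partial \mathcal{L}}{\partial Y} \\
\frac{\partial \mathcal{L}}{\partial B} &= s\,(XA)^{\top} G \\
\frac{\partial \mathcal{L}}{\partial A} &= s\,X^{\top}\,(G B^{\top}) \\
\frac{\partial \mathcal{L}}{\partial X} &= G W^{\top} + s\,(G B^{\top}) A^{\top}
\end{aligned}
```

W is frozen, so no gradient is formed for it. The product XA from the forward pass can be reused for the B gradient, and G Bᵀ is shared between the A and X gradients; writing the backward pass by hand makes that kind of reuse explicit.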


The Code

Snippet One: Standard LoRA fine-tune setup with Unsloth

from unsloth import FastLanguageModel
import torch

# ← THIS is the entry point: from_pretrained patches modules at load time
# Nothing in the training code below needs to change from standard HF
model, tokenizer = FastLanguageModel.from_pretrained(
    model_name = "unsloth/llama-3-8b-bnb-4bit",  # 4-bit pre-quantized
    max_seq_length = 2048,
    dtype = None,          # Auto-detect: BF16 on Ampere+, FP16 otherwise
    load_in_4bit = True,   # QLoRA: quantize base model to 4-bit NF4
)

# Attach LoRA adapters: only these matrices are trained
# r=16: rank of adapter. Higher = more capacity, more memory
# target_modules: which linear layers get adapters
# use_gradient_checkpointing="unsloth": ← THIS triggers async CPU offload
model = FastLanguageModel.get_peft_model(
    model,
    r = 16,
    target_modules = ["q_proj", "k_proj", "v_proj", "o_proj",
                      "gate_proj", "up_proj", "down_proj"],
    lora_alpha = 16,
    lora_dropout = 0,      # 0 is optimal for Unsloth's kernels
    bias = "none",
    use_gradient_checkpointing = "unsloth",  # ← key flag: not "True"
    random_state = 3407,
)

# Memory breakdown on an RTX 4090 (24GB):
#   Base model (4-bit Llama 3 8B):              ~4.5GB
#   LoRA adapter parameters:                    ~160MB at rank 16
#   Optimizer states (8-bit AdamW):             ~320MB
#   Activations (with unsloth checkpointing):   ~2.1GB
#   Remaining for KV cache + batch:             ~17GB
# → Llama 3 8B fine-tuning on a single 24GB consumer GPU: feasible

The critical flag is use_gradient_checkpointing="unsloth", the string rather than the boolean True. This activates Unsloth's async CPU offload path rather than standard gradient checkpointing, saving significantly more VRAM at minimal speed cost.
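The ~160MB adapter figure in the memory breakdown can be sanity-checked with a short calculation. This is a sketch under stated assumptions: the layer shapes are those of the public Llama 3 8B configuration (hidden size 4096, MLP size 14336, 32 layers, 8 key/value heads of dimension 128), and the adapters are assumed to be stored in FP32. The function name is hypothetical.

```python
# Llama 3 8B shapes, taken from the public model config (assumed here).
HIDDEN = 4096
INTERMEDIATE = 14336
KV_DIM = 1024        # 8 KV heads x 128 head dim (grouped-query attention)
NUM_LAYERS = 32

# (in_features, out_features) for each linear layer that gets an adapter
TARGETS = {
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, KV_DIM),
    "v_proj": (HIDDEN, KV_DIM),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, INTERMEDIATE),
    "up_proj": (HIDDEN, INTERMEDIATE),
    "down_proj": (INTERMEDIATE, HIDDEN),
}


def lora_param_count(rank: int) -> int:
    """Each adapter adds A (in x rank) and B (rank x out) per target layer."""
    per_layer = sum(rank * (fan_in + fan_out) for fan_in, fan_out in TARGETS.values())
    return per_layer * NUM_LAYERS


params = lora_param_count(16)
adapter_mib = params * 4 / 2**20   # 4 bytes per parameter if stored in FP32

print(params)        # 41943040
print(adapter_mib)   # 160.0
```

Doubling the rank doubles this number, which is why rank is the first knob to turn when adapter capacity, not VRAM, is the constraint.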

Snippet Two: Unsloth's RoPE Triton kernel design intent (reconstructed)

import triton
import triton.language as tl

# Standard PyTorch RoPE: two separate sin/cos kernel launches + clone()
# Unsloth RoPE: single fused kernel, inplace, with packed sequence support
# Benchmark: 2.3x faster on long context, 1.9x on short context

@triton.jit
def rope_embedding_kernel(
    Q, K,                         # Query and Key tensors, modified INPLACE
    cos, sin,                     # Precomputed cos/sin, (seq_len, head_dim // 2)
    seq_len,
    head_dim: tl.constexpr,       # must be a power of two for tl.arange
    LONG_INDEXING: tl.constexpr,  # ← tl.constexpr: compiler specializes for
                                  # short vs long context separately and
                                  # avoids int64 overhead on short runs
):
    # One Triton program handles both Q and K rotation
    # Previously: separate kernel launch for Q, separate for K
    # Now: fused, single launch, half the kernel dispatch overhead
    pid = tl.program_id(0)

    # Position id resets for packed sequences
    # ← THIS enables packing without cross-contaminating positional encodings
    # Simplification in this sketch: one program per position, one head,
    # contiguous (seq_len, head_dim) rows, so the program id is the position.
    if LONG_INDEXING:
        pos = pid.to(tl.int64)    # Only when context > threshold
    else:
        pos = pid                 # int32, faster for typical lengths

    half_offs = tl.arange(0, head_dim // 2)

    # Load cos/sin, apply rotation, write back inplace
    # No clone(), no contiguous transpose: VRAM allocation eliminated
    cos_val = tl.load(cos + pos * (head_dim // 2) + half_offs)
    sin_val = tl.load(sin + pos * (head_dim // 2) + half_offs)

    # rotate-half: (x1, x2) -> (x1*cos - x2*sin, x2*cos + x1*sin)
    q1 = tl.load(Q + pos * head_dim + half_offs)
    q2 = tl.load(Q + pos * head_dim + head_dim // 2 + half_offs)
    tl.store(Q + pos * head_dim + half_offs, q1 * cos_val - q2 * sin_val)
    tl.store(Q + pos * head_dim + head_dim // 2 + half_offs, q2 * cos_val + q1 * sin_val)

    # K handled in same kernel body, no second dispatch
    k1 = tl.load(K + pos * head_dim + half_offs)
    k2 = tl.load(K + pos * head_dim + head_dim // 2 + half_offs)
    tl.store(K + pos * head_dim + half_offs, k1 * cos_val - k2 * sin_val)
    tl.store(K + pos * head_dim + head_dim // 2 + half_offs, k2 * cos_val + k1 * sin_val)

The RoPE kernel's power is in what it removes: two kernel launches become one, clone() allocations disappear, and the LONG_INDEXING constexpr lets the Triton compiler generate specialized code for short and long context without branching overhead at runtime.
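For readers who want to check the rotation itself without a GPU, the following is a plain-Python reference for the rotate-half form of RoPE applied in place to one head vector. It is a sketch for illustration, not Unsloth's code: the function name and the default base of 10000 are assumptions, and it computes cos/sin on the fly rather than loading precomputed tables.

```python
import math


def rope_inplace(vec, position, base=10000.0):
    """Rotate one head vector in place using the rotate-half convention.

    Element i is paired with element i + half; each pair is rotated by an
    angle that depends on the token position and the pair index.
    """
    head_dim = len(vec)
    half = head_dim // 2
    for i in range(half):
        theta = position * base ** (-2.0 * i / head_dim)
        c, s = math.cos(theta), math.sin(theta)
        x1, x2 = vec[i], vec[i + half]
        vec[i] = x1 * c - x2 * s          # overwrite, no copy of the vector
        vec[i + half] = x2 * c + x1 * s
    return vec


q = [1.0, 2.0, 3.0, 4.0]
norm_before = math.sqrt(sum(x * x for x in q))
rope_inplace(q, position=5)
norm_after = math.sqrt(sum(x * x for x in q))
```

Because each pair undergoes a pure rotation, the vector norm is unchanged, and position 0 leaves the vector untouched. Both properties are cheap checks to run against any fused kernel.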

It In Action: End-to-End Worked Example

Task: Fine-tune Llama 3.1 8B on a medical Q&A dataset for a clinical summarization assistant.

Setup: Single NVIDIA RTX 4090 (24GB VRAM), Unsloth + TRL SFTTrainer, QLoRA rank=16.

Input: 10,000 clinical note / summary pairs, avg 512 tokens each. Dataset has 60% short sequences (under 256 tokens) and 40% long sequences (up to 1,024 tokens).

Step 1 — Model load + patching

FastLanguageModel.from_pretrained("unsloth/llama-3.1-8b-bnb-4bit")

Time: 42 seconds (4-bit pre-quantized weights from Unsloth HuggingFace hub, no quantization step at load time). Peak VRAM after load: 4.7GB.

Step 2 — Packing kicks in

Unsloth's auto-packing detects the mixed-length dataset. Short and long sequences are bin-packed into 2048-token tensors with sequence length metadata preserved for attention masking. No attention leaks across packed samples.

Token utilization before packing: ~58% (42% padding waste). Token utilization after packing: ~94%.

Theoretical speedup from packing (60% short sequences): approximately 2.1x training throughput.
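The effect of packing on token utilization can be illustrated with a generic first-fit-decreasing bin packer. This is a minimal sketch with toy sequence lengths; the function names are hypothetical, and this is not Unsloth's packing algorithm, which also has to carry per-sequence boundaries through to attention masking and RoPE positions.

```python
def pack_first_fit_decreasing(lengths, capacity):
    """Greedy bin packing: place each sequence in the first row with room."""
    bins = []  # each bin is the list of sequence lengths sharing one row
    for length in sorted(lengths, reverse=True):
        if length > capacity:
            raise ValueError(f"sequence of {length} tokens exceeds capacity {capacity}")
        for row in bins:
            if sum(row) + length <= capacity:
                row.append(length)
                break
        else:
            bins.append([length])  # no existing row had room
    return bins


def utilization(num_rows, total_tokens, row_length):
    """Fraction of token slots that hold real tokens rather than padding."""
    return total_tokens / (num_rows * row_length)


lengths = [200, 180, 1000, 240, 900, 150, 220, 1024]  # toy data
capacity = 2048
bins = pack_first_fit_decreasing(lengths, capacity)
total = sum(lengths)

padded = utilization(len(lengths), total, capacity)  # one sequence per row
packed = utilization(len(bins), total, capacity)     # several sequences per row
```

On this toy input the eight sequences fit into two rows instead of eight, so far fewer padded slots pass through the model for the same number of real tokens.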

Step 3 — Training run

# SFTTrainer config
per_device_train_batch_size = 2
gradient_accumulation_steps = 4   # effective batch size = 8
max_seq_length = 2048
num_train_epochs = 3
optim = "adamw_8bit"              # the Hugging Face training argument is named `optim`

Training throughput (standard HuggingFace, no Unsloth): ~480 tokens/sec. Training throughput (Unsloth kernels + packing): ~1,340 tokens/sec. (2.8x faster)

Peak VRAM (standard HF): 21.4GB (near OOM on 24GB card). Peak VRAM (Unsloth): 8.9GB. Headroom for larger batch or longer context.

Total training time for 3 epochs: 4.2 hours (vs. ~11.7 hours without Unsloth).

Step 4 — Export to GGUF for deployment

model.save_pretrained_gguf("medical_llama3_q4_k_m", tokenizer,
                           quantization_method = "q4_k_m")
# Output: medical_llama3_q4_k_m-unsloth.Q4_K_M.gguf (~4.6GB)
# Ready for Ollama, llama.cpp, or llama-server

Total export time: 8 minutes.

Results: Llama 3.1 8B fine-tuned for clinical summarization, trained on a single consumer GPU, exported to a 4.6GB GGUF file deployable on a MacBook Pro or local server. Cloud cost equivalent: approximately $12 on a single A100 instance (vs. $60+ on a multi-GPU setup without Unsloth).

Why This Design Works (and What It Trades Away)

Why it works: Unsloth exploits the fact that LLM fine-tuning has a very stable computational profile. The hot loops (RoPE embedding, SwiGLU/GeGLU MLP, attention projection) are the same operations repeated across every layer, every batch, every training step. Writing Triton kernels for these specific operations is a high-leverage investment: the kernels get used billions of times per training run, so even a 30% speedup per kernel compounds dramatically.

The inplace RoPE strategy is the clearest example. Standard PyTorch RoPE creates intermediate sin/cos tensors, clones Q and K, applies the rotation, and frees the intermediates. For a 70B model with 80 layers and 64 attention heads at head dimension 128, over a 2048-token sequence, those clones add up to hundreds of MB per forward pass. Eliminating them is not a micro-optimization; it is a structural memory reduction.
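A rough size estimate for those clones, as a sketch under stated assumptions: a Llama-style 70B configuration with 64 query heads and 8 key/value heads at head dimension 128, 16-bit activations, and a batch of one sequence. The helper name is hypothetical.

```python
def tensor_mib(seq_len, num_heads, head_dim, bytes_per_elem=2, batch=1):
    """Size in MiB of one (batch, seq_len, num_heads, head_dim) tensor."""
    return batch * seq_len * num_heads * head_dim * bytes_per_elem / 2**20


q_clone = tensor_mib(seq_len=2048, num_heads=64, head_dim=128)
k_clone = tensor_mib(seq_len=2048, num_heads=8, head_dim=128)
per_layer = q_clone + k_clone

print(q_clone)    # 32.0
print(k_clone)    # 4.0
print(per_layer)  # 36.0
```

That is about 36 MiB of temporary allocation per layer for a single 2048-token sequence. The figure scales linearly with batch size and sequence length, and the allocation is repeated at every layer.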

What it trades away: Multi-GPU training is officially supported but described by the team as having "major improvements coming soon", a signal that it is not yet mature. For teams needing distributed training across 8+ GPUs with tensor parallelism, Unsloth is not the right tool yet. DeepSpeed or FSDP remain the default there.

The codebase is primarily maintained by Daniel and Michael Han, a two-person founding team. This produces fast iteration but means bug fixes depend on a small bottleneck. The GitHub issues list (955 open as of April 2026) reflects both high adoption and limited bandwidth for support.

QLoRA (4-bit) training for MoE models is explicitly not recommended due to bitsandbytes limitations; use BF16 LoRA or full fine-tuning for MoE architectures like DeepSeek, Qwen3 MoE, and gpt-oss.

Technical Moats

What makes Unsloth hard to replicate:

The Triton kernels are hand-derived from first principles. Daniel Han has documented the mathematical derivations publicly: the sin/cos gradient formulations, the inplace rotation proofs, the logsumexp tricks for GRPO stability. Writing correct and fast Triton is hard. Writing correct and fast Triton that composes with gradient checkpointing, packing, and QLoRA simultaneously is significantly harder. The kernel library has been stress-tested across hundreds of model architectures and GPU generations. That breadth of testing is not replicable quickly.

The LONG_INDEXING: tl.constexpr pattern is a representative example of the craft level. Using a compile-time constant to specialize the kernel for short vs. long context eliminates the runtime overhead of int64 indexing on typical-length sequences while still handling tensors whose element offsets exceed the signed int32 range (about 2.1 billion). This is the kind of detail that separates a working implementation from a performant one.
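The host-side decision behind such a flag can be sketched as a simple range check. This is an illustration only: the function name and the row layout are assumptions, and how Unsloth actually picks the threshold is not described in this article.

```python
INT32_MAX = 2**31 - 1


def needs_long_indexing(num_rows: int, row_stride: int) -> bool:
    """True when the largest element offset no longer fits in a signed int32."""
    largest_offset = num_rows * row_stride - 1
    return largest_offset > INT32_MAX


# 2,048 tokens x 32 heads, 128 elements per head row
print(needs_long_indexing(2048 * 32, 128))       # False
# 1,000,000 tokens x 32 heads, 128 elements per head row
print(needs_long_indexing(1_000_000 * 32, 128))  # True
```

Passing the result as a constexpr argument lets the compiler emit one int32 variant and one int64 variant, so the common short-context case never pays for 64-bit address arithmetic.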

Community network effect: 58.6k stars, free Colab notebooks for every major model, direct collaboration with model teams (Qwen3, Llama 4, Mistral, Phi-4, gpt-oss). Unsloth finds and fixes upstream model bugs: their work on Qwen3 positional encoding and Llama 4 attention masks contributed patches back to the official repos. That bidirectional relationship with model developers creates information asymmetry competitors cannot easily match.

Insights

Insight One: The "no accuracy loss" claim is real for standard LoRA but needs qualification for QLoRA.

Unsloth's documentation and benchmarks correctly demonstrate that their Triton kernels produce mathematically identical outputs to standard PyTorch for LoRA fine-tuning — the operations are exact, not approximated. But QLoRA involves NF4 quantization of the base model weights, and quantization is inherently lossy. Unsloth does not introduce additional approximation beyond what QLoRA itself does — but the baseline accuracy loss from 4-bit quantization still exists. For most tasks the gap is negligible. For tasks requiring high numerical precision or fine-grained factual recall, the right comparison is not Unsloth QLoRA vs. full fine-tuning. It is Unsloth QLoRA vs. Unsloth LoRA in BF16. The team explicitly warns against QLoRA for MoE models (higher-than-normal quantization differences). This nuance gets lost in the marketing.
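To see why any 4-bit scheme is lossy regardless of how exact the kernels are, here is a toy round trip. It uses plain uniform absmax quantization as a stand-in; NF4 uses a non-uniform codebook and block-wise scales, so the numbers differ, but the principle is the same. All names here are hypothetical.

```python
def quantize_absmax_4bit(values):
    """Map floats to signed 4-bit integer codes in [-8, 7] with one shared scale."""
    scale = max(abs(v) for v in values) / 7  # largest magnitude maps to level 7
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    return [c * scale for c in codes]


weights = [0.12, -0.50, 0.33, 0.70]
codes, scale = quantize_absmax_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

The restored weights differ from the originals by up to half a quantization step. Exact kernels preserve whatever the quantized base model computes; they cannot recover the information discarded at quantization time.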

Insight Two: Unsloth's biggest impact is not training speed. It is hardware economics.

A 2.8x training speedup matters. But the more transformative effect is VRAM headroom. When peak VRAM drops from 21.4GB to 8.9GB on the same task, you can use a 24GB consumer GPU instead of an 80GB A100. At scale, the cost difference between an RTX 4090 (approximately $1,600 retail) and an A100 (approximately $15,000) determines whether a research team, startup, or individual can fine-tune at all. Unsloth does not make fine-tuning cheaper by some percentage. It makes fine-tuning accessible to a category of practitioners who were previously priced out. The capability unlock, not the speed gain, is the real value.

Takeaway

Unsloth's GRPO implementation exposes a previously hidden memory bug in standard implementations.

Standard GRPO requires creating two logit tensors of shape (num_generations, context_length, vocab_size) to compute the policy loss. For 8 generations at 20K context (20,480 tokens) with a 128K vocabulary (Llama 3's case), this is 2 × 2 bytes × 8 × 20,480 × 128,256 ≈ 78.3GB (binary gigabytes) in VRAM, more than a single 80GB H100 holds. Unsloth's chunked logit computation processes the loss over mini-batches, materializing only a fraction of the logit tensor at any time. The result: 9.8GB for the same computation. A further detail: Unsloth discovered that when chunking across the batch dimension, the existing gradient checkpointing logic did not offload hidden state activations correctly: the activations silently accumulated in VRAM without being freed. They fixed this with explicit offload logic outside the model's forward pass. The bug existed in all other GRPO implementations. Unsloth found it while building the memory profiler for their own system.
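The logit memory figures can be reproduced with a few lines of arithmetic. This sketch reads 20K as 20 × 1,024 = 20,480 tokens and GB as binary gigabytes (2^30 bytes), which is the reading that yields 78.3; the function name is hypothetical.

```python
def logits_gib(num_tensors, bytes_per_elem, num_generations, context_len, vocab_size):
    """Memory in GiB for logit tensors of shape (generations, context, vocab)."""
    total_bytes = num_tensors * bytes_per_elem * num_generations * context_len * vocab_size
    return total_bytes / 2**30


# Two 16-bit logit tensors, 8 generations, 20K context, Llama 3 vocabulary
full = logits_gib(2, 2, 8, 20 * 1024, 128_256)
# Same tensors if only one generation's logits are materialized at a time
one_at_a_time = logits_gib(2, 2, 1, 20 * 1024, 128_256)

print(round(full, 1))           # 78.3
print(round(one_at_a_time, 1))  # 9.8
```

Materializing one generation at a time gives exactly one eighth of the full figure, which lines up with the 9.8GB number quoted for the chunked path. Whether Unsloth chunks at precisely that granularity is an inference from the arithmetic, not something stated in this article.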

TL;DR For Engineers

Unsloth is a Triton kernel replacement library, not a training framework: it patches HuggingFace Transformers modules at load time, leaving the training API identical

Core gains come from three sources: fused RoPE/MLP Triton kernels (2–3x faster), async gradient checkpointing to CPU RAM (saves 50–370GB in GRPO), and uncontaminated sequence packing (up to 5x throughput on short-heavy datasets)

GRPO memory: standard implementations need 78.3GB for 8 generations at 20K context; Unsloth needs 9.8GB for the same computation via chunked logit materialization

QLoRA (4-bit) is not recommended for MoE architectures (use BF16 LoRA instead); multi-GPU support is functional but not yet mature

For solo GPU fine-tuning on consumer or mid-tier hardware, Unsloth is the correct default; for 8+ GPU distributed training with tensor parallelism, use DeepSpeed or FSDP instead

Conclusion: The Kernel Is the Product

Unsloth made a bet that the abstraction layer matters more than the framework layer. HuggingFace Transformers is already excellent as a model API. TRL is already good for training loops. The gap was in the compute primitives underneath, the kernels that run a million times per training run and waste memory on every iteration. By dropping into Triton and rewriting those primitives from scratch, Unsloth shifted the constraint from hardware budget to software craft. A two-person team with deep kernel expertise has made fine-tuning on a $1,600 consumer GPU more capable than what a $15,000 A100 could achieve with off-the-shelf tools. That is not a tooling story. That is an argument about where the value actually lives in the ML stack.

References

Unsloth GitHub Repository — 58.6k stars, Apache-2.0 core

Unsloth Documentation — official docs covering all training modes

Unsloth Free Notebooks — Colab notebooks for every major model

Unsloth: MoE Training 12x Faster Blog — Split LoRA, grouped_mm, Triton MoE kernels

Unsloth: 3x Faster Training with Packing — RoPE kernel, packing algorithm, benchmarks

Unsloth GRPO RL Guide — GRPO memory optimization, chunked logits

Unsloth Long-Context GRPO Blog — 78.3GB vs. 9.8GB GRPO comparison

Unsloth GRPO Long Context Docs — 7x longer context RL benchmarks

Unsloth VLM RL Guide — Vision GRPO, 90% VRAM reduction

HuggingFace Blog: Unsloth — community benchmarks and adoption data

PagedAttention Paper, arXiv 2309.06180 — vLLM integration context for GRPO inference

Unsloth is a Triton kernel replacement library that patches HuggingFace Transformers at load time, delivering 2–5x faster fine-tuning and 70–90% less VRAM versus standard implementations with mathematically exact outputs. Its three core mechanisms, fused RoPE/MLP kernels, async gradient checkpointing to CPU RAM, and uncontaminated sequence packing, compose to make Llama 3 8B QLoRA fine-tuning feasible on a 24GB consumer GPU at under 9GB peak VRAM. The GRPO memory optimization reduces logit materialization from 78.3GB to 9.8GB for 8-generation, 20K-context RL training. The real value is not the speed gain, it is the hardware accessibility shift that moves fine-tuning from A100 territory to consumer GPU territory.
