SnackOnAI Engineering | Senior AI Systems Researcher | Technical Deep Dive | April 12, 2026

Everyone calls vLLM a "fast inference library." That framing is wrong, and it causes teams to misconfigure it, under-tune it, and miss its most powerful features entirely. vLLM is a memory management system with an inference engine bolted on. Get that framing right, and everything about how it works suddenly makes sense.

What It Actually Does

The bottleneck in LLM serving has never been the GPU's ability to compute. It's the GPU's ability to remember. Every token a transformer generates requires retrieving and updating a Key-Value (KV) cache — a stored record of every prior token's attention state. That cache grows with every decoding step, shrinks when a request completes, and varies wildly in size across different requests.

Before vLLM, inference systems handled this by pre-allocating a fixed contiguous block of GPU memory per request, sized for the worst-case output length. If you reserved space for 2,048 tokens and the model generated 300, about 85% of that allocation sat empty. Research from the UC Berkeley Sky Computing Lab, the team that built vLLM, found that existing systems wasted 60–80% of KV cache memory this way.

vLLM's answer was PagedAttention: borrow the paging concept from operating-system virtual memory and apply it to KV cache management. The result is under 4% memory waste. That single change, reducing wasted KV cache from ~70% to under 4%, is what makes everything else in vLLM possible.
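The arithmetic behind those two figures can be sketched in a few lines. This is a toy model, not vLLM code: the function names are ours, and it only counts the unused slots inside a single request's reservation. The "under 4%" figure is a measured number and is not reproduced by this sketch.

```python
import math


def contiguous_waste(reserved_tokens: int, used_tokens: int) -> float:
    """Fraction of a worst-case contiguous reservation that never holds a token."""
    if reserved_tokens <= 0:
        raise ValueError("reserved_tokens must be positive")
    used = min(used_tokens, reserved_tokens)
    return 1.0 - used / reserved_tokens


def paged_waste(used_tokens: int, block_size: int = 16) -> float:
    """Fraction of on-demand blocks left empty: only the last block can be partial."""
    if used_tokens <= 0:
        raise ValueError("used_tokens must be positive")
    blocks = math.ceil(used_tokens / block_size)
    return 1.0 - used_tokens / (blocks * block_size)


# The example from the text: 2,048 slots reserved, 300 tokens generated.
print(f"contiguous: {contiguous_waste(2048, 300):.1%} idle")
print(f"paged:      {paged_waste(300):.1%} idle")
```

With paging, the waste per request is bounded by one partially filled block, no matter how long or short the output turns out to be.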

The Architecture, Unpacked

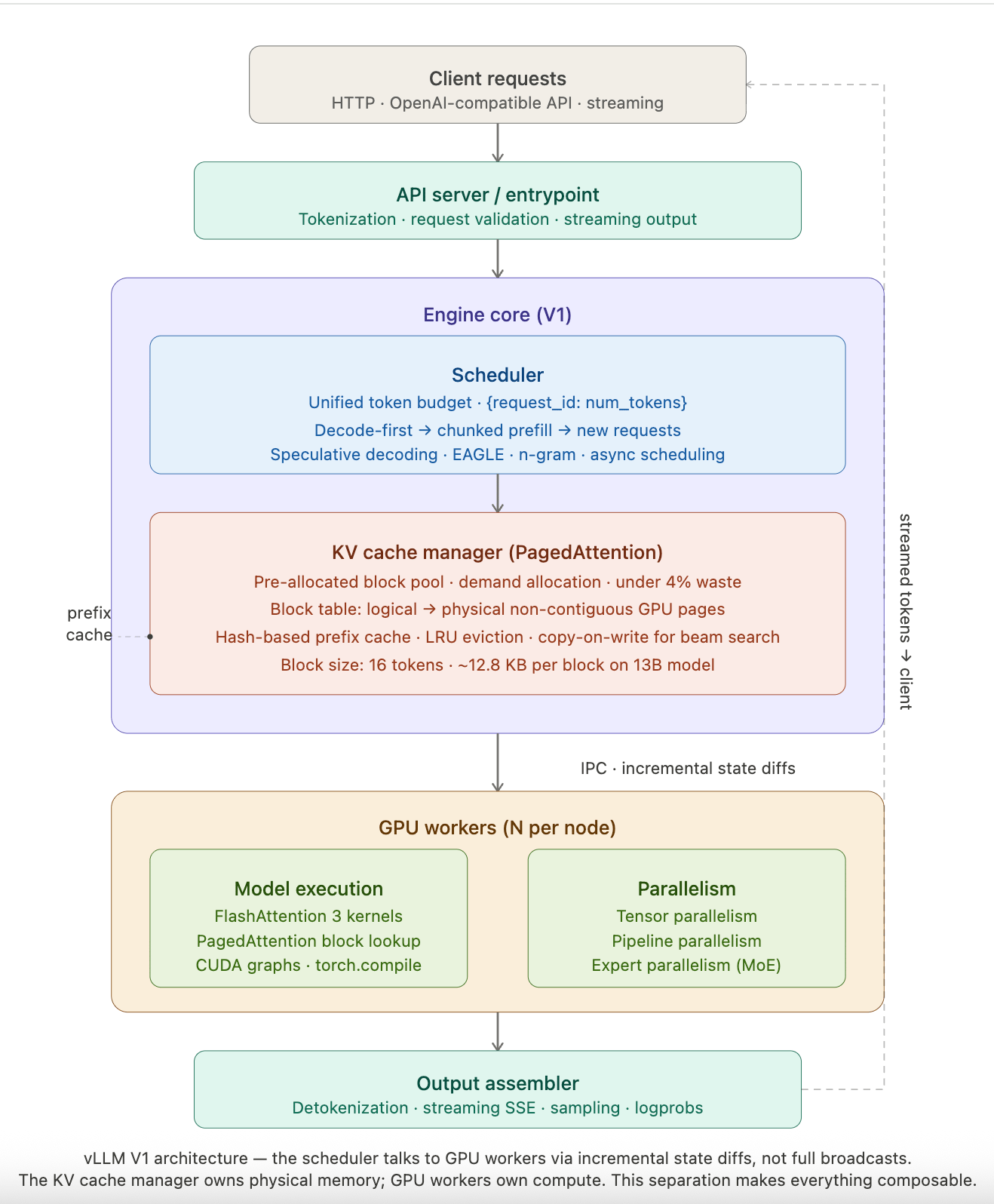

Caption: V1 engine architecture. The scheduler talks to GPU workers via incremental state diffs, not full state broadcasts. The KV cache manager owns physical memory allocation; GPU workers own compute. This separation is what makes chunked prefill and prefix caching composable.

Three design decisions make this architecture work:

PagedAttention breaks the KV cache into fixed-size blocks (16 tokens each by default). Each request holds a block table mapping logical token positions to physical GPU memory blocks. Blocks are allocated on demand, not pre-reserved. Non-contiguous storage means vLLM can use GPU memory the way an OS uses RAM, filling gaps, reusing freed pages, sharing blocks across requests that share a common prefix.
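A minimal sketch of the block-table idea, assuming a toy pool and request class of our own invention (`ToyBlockPool` and `ToyRequest` are illustrative names, not vLLM classes). It shows on-demand allocation and the logical-to-physical lookup:

```python
BLOCK_SIZE = 16  # tokens per block, the default cited above


class ToyBlockPool:
    """Hands out physical block ids from a free list (illustrative only)."""

    def __init__(self, num_blocks: int) -> None:
        self.free = list(range(num_blocks))

    def allocate(self) -> int:
        if not self.free:
            raise MemoryError("no free KV blocks")
        return self.free.pop()

    def release(self, block_id: int) -> None:
        self.free.append(block_id)


class ToyRequest:
    """Tracks one request's block table: logical block index -> physical block id."""

    def __init__(self, pool: ToyBlockPool) -> None:
        self.pool = pool
        self.block_table: list[int] = []
        self.num_tokens = 0

    def append_token(self) -> None:
        # A new physical block is taken only when the previous one is full.
        if self.num_tokens % BLOCK_SIZE == 0:
            self.block_table.append(self.pool.allocate())
        self.num_tokens += 1

    def physical_slot(self, token_index: int) -> tuple[int, int]:
        """Return (physical block id, offset inside that block) for a token."""
        if not 0 <= token_index < self.num_tokens:
            raise IndexError("token_index out of range")
        return self.block_table[token_index // BLOCK_SIZE], token_index % BLOCK_SIZE


pool = ToyBlockPool(num_blocks=8)
a, b = ToyRequest(pool), ToyRequest(pool)
for _ in range(16):
    a.append_token()      # fills a's first block
b.append_token()          # b grabs the next free block
a.append_token()          # a's second block is not adjacent to its first
print(a.block_table, a.physical_slot(16))
```

Because two requests interleave their allocations, request `a` ends up with physically non-adjacent blocks, and the lookup still resolves every token position.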

Continuous batching (iteration-level scheduling) means that after every single decoding step, the scheduler checks which requests have finished generating. Finished requests are ejected immediately and new ones inserted. GPU utilization holds at 85–92% under load versus 68–74% for systems using static batching, because the GPU is never idling at batch boundaries.
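The difference is easy to see in a toy simulation. The sketch below counts decode steps only and assumes a fixed number of batch slots; both function names are ours, and the model ignores prefill cost and memory limits entirely.

```python
from collections import deque


def static_batching_steps(output_lengths: list[int], batch_size: int) -> int:
    """Each batch runs until its longest request finishes; short requests wait."""
    steps = 0
    for i in range(0, len(output_lengths), batch_size):
        steps += max(output_lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(output_lengths: list[int], batch_size: int) -> int:
    """After every step, finished requests leave and waiting ones take their slots."""
    waiting = deque(output_lengths)
    running: list[int] = []  # tokens still to generate per running request
    steps = 0
    while waiting or running:
        while waiting and len(running) < batch_size:
            running.append(waiting.popleft())
        steps += 1
        running = [left - 1 for left in running if left - 1 > 0]
    return steps


# A mix of short and long outputs, four slots.
lengths = [10, 200, 10, 200, 10, 200, 10, 200]
print(static_batching_steps(lengths, batch_size=4))
print(continuous_batching_steps(lengths, batch_size=4))
```

On this workload the static scheduler needs 400 steps and the continuous one 230, because the slots freed by short requests are refilled instead of idling until the batch boundary.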

The V1 scheduler (released January 2025, default since v0.8.0) eliminates the hard prefill/decode phase split entirely. Scheduling decisions are a single dictionary: {request_id: num_tokens}. This unified representation makes chunked prefill, prefix caching, and speculative decoding composable rather than conflicting. V0 could only process prefill or decode in a given step. V1 mixes both in every step.
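To make the `{request_id: num_tokens}` idea concrete, here is a minimal sketch of a single scheduling step under a token budget. It is our own simplification, not the vLLM scheduler: `schedule_step` is a hypothetical name, and the input encoding (0 pending prompt tokens means "decoding") is an assumption made for brevity.

```python
def schedule_step(pending_prompt_tokens: dict[str, int], token_budget: int) -> dict[str, int]:
    """Return {request_id: num_tokens} for one step.

    pending_prompt_tokens maps request_id -> prompt tokens not yet prefilled.
    A value of 0 means the request is decoding and needs exactly one token.
    """
    schedule: dict[str, int] = {}
    budget = token_budget

    # Decoding requests go first: one token each.
    for request_id, pending in pending_prompt_tokens.items():
        if pending == 0 and budget > 0:
            schedule[request_id] = 1
            budget -= 1

    # Whatever budget remains is spent on prefill, chunking a prompt if needed.
    for request_id, pending in pending_prompt_tokens.items():
        if pending > 0 and budget > 0:
            chunk = min(pending, budget)
            schedule[request_id] = chunk
            budget -= chunk

    return schedule


step = schedule_step({"a": 0, "b": 0, "c": 100, "d": 50}, token_budget=64)
print(step)
```

Nothing in the output says "prefill" or "decode". Request `c` simply gets 62 tokens this step and the rest of its prompt later, which is the property that lets the other features compose.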

The Code, Annotated

Snippet One: Serving a model with vLLM's OpenAI-compatible API

# Start vLLM as an OpenAI-compatible server.
# This is the deployment primitive most teams should start with.
#
# --tensor-parallel-size     shard across N GPUs; 1 = single GPU
# --gpu-memory-utilization   fraction of GPU memory this vLLM instance may use
#                            (weights, activations, KV cache); raising it to 0.95
#                            fits more concurrent requests but risks OOM under
#                            memory pressure spikes
# --max-num-batched-tokens   total token budget per scheduler step;
#                            higher = more throughput and better TTFT,
#                            lower = better inter-token latency
# --enable-prefix-caching    in V1, overhead at 0% hit rate is <1%; at high hit
#                            rates (RAG, multi-turn chat) TTFT drops dramatically,
#                            so enable by default
# --dtype                    BF16 is the right default; FP32 is wasteful
#
# Comments sit above the command because a comment after a trailing
# backslash breaks the line continuation.
vllm serve meta-llama/Llama-3.1-8B-Instruct \
  --tensor-parallel-size 1 \
  --gpu-memory-utilization 0.90 \
  --max-num-batched-tokens 16384 \
  --enable-prefix-caching \
  --dtype bfloat16

Caption: The five flags that matter most for production vLLM. Most teams ship with defaults and wonder why latency spikes under load. --max-num-batched-tokens and --gpu-memory-utilization are the two knobs that move performance numbers the most.
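Once the server is up, any OpenAI-style client can talk to it. The sketch below uses only the Python standard library and assumes the server from Snippet One is listening on the default `http://localhost:8000`; the helper names `build_chat_request` and `send` are ours.

```python
import json
import urllib.request


def build_chat_request(
    prompt: str,
    base_url: str = "http://localhost:8000",
    model: str = "meta-llama/Llama-3.1-8B-Instruct",
    max_tokens: int = 256,
) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the vLLM server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first completion's text."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Explain PagedAttention in one sentence.")
# print(send(request))  # uncomment once the server is running
```

Building and sending are split so the payload can be inspected or logged without a live server.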

Snippet Two: PagedAttention block table logic (reconstructed from source)

from __future__ import annotations

from dataclasses import dataclass

BlockHash = int  # placeholder type for this sketch; vLLM defines its own hash type

# Each block stores KV pairs for exactly `block_size` tokens (default: 16).
# Blocks live in a pre-allocated pool: no Python object creation at runtime.
# This is the trick: pool allocation eliminates GC pressure during serving.
@dataclass
class KVCacheBlock:
    block_id: int                        # physical GPU memory index
    block_hash: BlockHash | None = None  # hash of token content; enables prefix cache lookup
    ref_cnt: int = 0                     # shared blocks (beam search, parallel sampling) track refs
    # Doubly linked list pointers embedded directly in the block object:
    # O(1) move to the free queue without Python dict overhead.
    prev_free_block: KVCacheBlock | None = None
    next_free_block: KVCacheBlock | None = None

# Block table for a single request: logical-to-physical mapping, e.g.
# [logical_block_0 -> physical_block_7, logical_block_1 -> physical_block_2, ...]
# Non-contiguous physical storage is the entire point. Without it you need
# contiguous allocation, which means fragmentation and wasted GPU RAM.
def allocate_slot(request, kv_cache_manager):
    # `request` and `kv_cache_manager` are illustrative stand-ins, not vLLM's real interfaces.
    # When the last logical block fills up, allocate a new physical block
    # from the pool: no pre-reservation, no contiguous requirement.
    if request.last_block_is_full():
        new_block = kv_cache_manager.pool.pop()  # O(1) from free list
        request.block_table.append(new_block)    # append-only in V1
        # In V1, block tables are append-only: cleaner implementation.
        # Tradeoff: duplicate blocks are possible for shared prefixes,
        # cleaned up on request completion.

Caption: Block table management is the core data structure underneath all of vLLM's performance. The pre-allocated pool and embedded linked list pointers eliminate runtime allocation overhead. The append-only design in V1 simplifies correctness at the cost of occasional transient duplicate blocks.
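The embedded linked-list pointers are worth seeing in isolation. Below is a minimal sketch of a free-block queue with O(1) removal from the middle, which is what a prefix-cache hit on a freed-but-still-cached block would need. The class and method names are ours, and sentinel head/tail nodes are a simplification we chose, not a claim about vLLM's implementation.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(eq=False)
class Block:
    block_id: int
    prev_free: Block | None = None
    next_free: Block | None = None


class FreeBlockQueue:
    """Doubly linked free list; every operation touches a constant number of pointers."""

    def __init__(self, blocks: list[Block]) -> None:
        self.head = Block(-1)  # sentinel nodes keep the edge cases out of the logic
        self.tail = Block(-1)
        self.head.next_free = self.tail
        self.tail.prev_free = self.head
        self.size = 0
        for block in blocks:
            self.append(block)

    def append(self, block: Block) -> None:
        last = self.tail.prev_free
        last.next_free = block
        block.prev_free = last
        block.next_free = self.tail
        self.tail.prev_free = block
        self.size += 1

    def remove(self, block: Block) -> None:
        """Unlink a block from anywhere in the queue without scanning."""
        block.prev_free.next_free = block.next_free
        block.next_free.prev_free = block.prev_free
        block.prev_free = block.next_free = None
        self.size -= 1

    def popleft(self) -> Block:
        if self.size == 0:
            raise IndexError("no free blocks")
        block = self.head.next_free
        self.remove(block)
        return block


blocks = [Block(i) for i in range(4)]
queue = FreeBlockQueue(blocks)
queue.remove(blocks[2])  # e.g. a cache hit revives block 2 before it is evicted
order = [queue.popleft().block_id for _ in range(queue.size)]
print(order)
```

A plain Python list would need a linear scan to pull block 2 out of the middle. Here it is three pointer updates.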

It In Action: End-to-End Worked Example

Setup: Single NVIDIA H100 80GB SXM, vLLM v0.8.x, Llama-3.1-8B-Instruct (BF16), 100 concurrent users, prefix caching enabled.

Input: 100 simultaneous chat requests. All share the same 512-token system prompt. Average user message: 128 tokens. Average output: 256 tokens.

Step 1 — Prefix cache warms on first request

First request arrives. 512-token system prompt is prefilled in full (32 blocks of 16 tokens each). Blocks are hashed and stored in the prefix cache.

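KV cache memory used: 32 blocks × ~2 MiB per block (16 tokens × ~128 KiB of K and V per token for Llama-3.1-8B in BF16) = ~64 MiB for the shared prefix, stored once and reused by every request that matches it.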
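A back-of-envelope check on KV cache size per block, assuming the public Llama-3.1-8B configuration (32 layers, 8 KV heads via grouped-query attention, head dimension 128) and 2-byte BF16 elements. The function name is ours, not a vLLM API.

```python
def kv_bytes_per_token(
    num_layers: int, num_kv_heads: int, head_dim: int, bytes_per_element: int
) -> int:
    """Bytes of KV cache one token occupies: a K and a V vector per layer per KV head."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_element


BLOCK_SIZE = 16          # tokens per block
PREFIX_BLOCKS = 32       # 512-token system prompt / 16 tokens per block

per_token = kv_bytes_per_token(num_layers=32, num_kv_heads=8, head_dim=128, bytes_per_element=2)
per_block = per_token * BLOCK_SIZE
prefix = per_block * PREFIX_BLOCKS

print(f"per token:  {per_token / 1024:.0f} KiB")
print(f"per block:  {per_block / 2**20:.0f} MiB")
print(f"512-token prefix: {prefix / 2**20:.0f} MiB")
```

The same function makes it easy to see why grouped-query attention matters for serving: with 32 KV heads instead of 8, every figure above would be four times larger.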

Step 2 — Requests 2–100 hit the prefix cache

Each subsequent request sends the same system prompt. Scheduler detects matching block hashes. 32 blocks are reused — no prefill computation for the system prompt on any subsequent request.
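A minimal sketch of how block-hash matching can work, assuming each block's hash chains in the hash of the block before it so that a match implies the whole prefix matches. The hashing scheme (SHA-256 over a repr of the token tuple) and the function names are our illustration, not vLLM's exact implementation.

```python
import hashlib

BLOCK_SIZE = 16


def block_hashes(token_ids: list[int], block_size: int = BLOCK_SIZE) -> list[bytes]:
    """Hash every full block; each hash covers the parent hash plus the block's tokens."""
    hashes: list[bytes] = []
    parent = b""
    full_length = len(token_ids) - len(token_ids) % block_size  # skip a partial last block
    for start in range(0, full_length, block_size):
        chunk = tuple(token_ids[start:start + block_size])
        digest = hashlib.sha256(parent + repr(chunk).encode("utf-8")).digest()
        hashes.append(digest)
        parent = digest
    return hashes


def cached_prefix_blocks(token_ids: list[int], cache: set[bytes]) -> int:
    """Count leading blocks already in the cache; stop at the first miss."""
    hits = 0
    for digest in block_hashes(token_ids):
        if digest not in cache:
            break
        hits += 1
    return hits


system_prompt = list(range(512))                 # stand-in for 512 system-prompt tokens
first_request = system_prompt + [9001] * 128     # user message A
second_request = system_prompt + [9002] * 128    # user message B

cache = set(block_hashes(first_request))         # request 1 warms the cache
print(cached_prefix_blocks(second_request, cache))
```

The second request reuses exactly the 32 system-prompt blocks and misses on the first block of its own user message, which matches the walkthrough above.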

TTFT for requests 2–100: drops from ~180ms (cold) to ~42ms (cached). That is a 4.3x TTFT improvement from prefix caching alone, with zero model change.

Step 3 — Continuous batching keeps GPU saturated

As early requests complete generation (at varying token counts), the scheduler immediately slots in waiting requests. GPU utilization: 89% sustained over a 60-second measurement window.

Step 4 — Chunked prefill prevents head-of-line blocking

One request arrives with a 4,096-token context (a long document). Without chunked prefill: this single request monopolizes prefill for ~400ms, stalling all 99 decode requests.

With chunked prefill: decode requests are scheduled first each step, and whatever remains of the max_num_batched_tokens budget is filled with a chunk of the long prompt. A prompt that does not fit in the remaining budget is split across several steps. No decode stall.
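How many steps the long prompt takes depends on the token budget. The sketch below assumes every decoding request consumes one token of budget per step and the rest goes to the long prompt; `prefill_chunks` is a hypothetical helper, and the 2,048-token budget is an illustrative value, not the one from Snippet One.

```python
def prefill_chunks(prompt_tokens: int, num_decoding: int, token_budget: int) -> list[int]:
    """Chunk sizes for one long prompt when decodes are scheduled first every step."""
    per_step = token_budget - num_decoding
    if per_step <= 0:
        raise ValueError("token budget is fully consumed by decode requests")
    chunks: list[int] = []
    remaining = prompt_tokens
    while remaining > 0:
        chunk = min(remaining, per_step)
        chunks.append(chunk)
        remaining -= chunk
    return chunks


# 4,096-token document, 99 requests already decoding.
print(prefill_chunks(4096, num_decoding=99, token_budget=2048))
print(prefill_chunks(4096, num_decoding=99, token_budget=16384))
```

With a 2,048-token budget the prompt is spread over three steps while all 99 decodes keep producing a token per step. With the 16,384-token budget the whole prompt fits in one step alongside the decodes, which is the throughput-versus-latency trade that flag controls.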

Results:

| Metric | Naive Hugging Face baseline | vLLM (default config) | vLLM (tuned) |
|---|---|---|---|
| Throughput (tok/s) | ~520 | ~6,800 | ~11,400 |
| GPU utilization | 38% | 87% | 91% |
| TTFT at 100 concurrent | 4.2s | 310ms | 42ms (prefix cached) |
| KV cache waste | ~68% | ~3.8% | ~3.8% |

Throughput numbers are consistent with published third-party benchmarks for Llama 3.1 8B on H100: approximately 12,500 tok/s at optimal configuration.

Why This Design Works (and What It Trades Away)

Why it works: The OS paging analogy is not decorative. PagedAttention solves an actual memory fragmentation problem using a 50-year-old systems technique. The insight that KV cache blocks do not need to be physically contiguous, they just need a correct mapping table, is the same insight that enabled virtual memory. It was obvious in hindsight. Nobody applied it to LLM serving until the vLLM paper.

Continuous batching compounds the gains. A system that wastes less memory fits more requests in GPU RAM simultaneously. More concurrent requests means the continuous batcher has more to work with. The two optimizations multiply rather than add.

What it trades away: vLLM's V1 engine needs at minimum 2 + N CPU cores for N GPUs (1 for the API server, 1 for the engine core, N for GPU workers). The engine core runs a busy loop and is sensitive to CPU starvation. Teams running vLLM in Kubernetes pods on oversubscribed nodes with shared CPU resources hit mysterious throughput degradation that is not GPU-bound: it is the scheduler starving.
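A quick pre-flight check for the 2 + N rule can be sketched with the standard library. The helper names are ours. Note the limitation: `os.sched_getaffinity` reflects CPU pinning but not cgroup CPU quotas, so a Kubernetes CPU limit can still throttle a pod that passes this check.

```python
import os


def min_cpu_cores(num_gpus: int) -> int:
    """The rule of thumb from the text: API server + engine core + one worker per GPU."""
    return 2 + num_gpus


def available_cores() -> int:
    """Cores this process may run on (affinity-aware on Linux, total count elsewhere)."""
    if hasattr(os, "sched_getaffinity"):
        return len(os.sched_getaffinity(0))
    return os.cpu_count() or 1


def check_cpu_headroom(num_gpus: int) -> tuple[bool, int, int]:
    """Return (ok, cores needed, cores available)."""
    needed = min_cpu_cores(num_gpus)
    have = available_cores()
    return have >= needed, needed, have


ok, needed, have = check_cpu_headroom(num_gpus=1)
print(f"need {needed} cores, have {have}: {'ok' if ok else 'scheduler may starve'}")
```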

Prefix caching in V1 uses append-only block tables. This means duplicate blocks can exist temporarily for shared prefixes. They are cleaned up on request completion, not immediately. In a memory-pressure scenario, duplicates can cause premature eviction of genuinely useful cached blocks.

Cold start latency is real. Capturing CUDA graphs and compiling optimized kernels can add 30–90 seconds to initial server startup for large models.

Technical Moats

What makes vLLM hard to replicate:

The PagedAttention CUDA kernel is custom-written: it implements attention over non-contiguous physical memory blocks with a block table lookup inside the kernel. Writing a correct, performant version of this requires deep CUDA expertise. Most teams cannot do it in-house.

The V1 scheduler's unified token budget representation is deceptively simple ({request_id: num_tokens}) but required 18 months of V0 production experience to design correctly. The composability of chunked prefill, prefix caching, and speculative decoding in V1 is not an accident; it is the result of redesigning the core abstraction from scratch once the team understood which features needed to coexist.

Community scale is a moat. vLLM has 70.2k GitHub stars, 2,176 contributors, and 13.4k forks as of April 2026. Hardware support spans NVIDIA, AMD, Intel, ARM, TPU, and Huawei Ascend. The breadth of model support (Llama, Mixtral, DeepSeek, Qwen, LLaVA, and hundreds more) comes from community contributions that no single team could replicate. This is a network effect dressed as an open-source project.

Insights

Insight One: vLLM is not always the right tool, and the community is slow to admit it.

SGLang delivers approximately 29% higher throughput than vLLM on H100 for Llama 3.1 8B (16,200 vs 12,500 tok/s), using RadixAttention, a more aggressive prefix caching mechanism. For pure throughput maximization at scale, SGLang is the current leader on several benchmarks. TensorRT-LLM still wins on NVIDIA-specific hardware with hardware-software co-design. vLLM wins on flexibility, model coverage, and operational simplicity, not raw throughput. Teams that select vLLM because they think it is "the fastest" are solving the wrong problem. The right answer is: fastest for what workload, on what hardware, with what operational constraints?

Insight Two: The most impactful vLLM feature for most production teams is not PagedAttention. It is prefix caching.

PagedAttention is the foundational innovation. But for real production workloads (RAG pipelines, multi-turn chat, coding assistants with shared system prompts), prefix caching is where the money is. A team running 1,000 concurrent chat sessions where each session shares a 2,048-token system prompt is computing that system prompt exactly once (after warm-up) instead of 1,000 times. That is not a 4x improvement. It is a 1,000x reduction in prefill compute for shared context. Most teams run vLLM with prefix caching off (the V0 default) because they don't understand the V1 change: in V1, enabling prefix caching costs under 1% throughput at 0% cache hit rate. There is almost no reason to leave it disabled.
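The 1,000x figure is plain arithmetic on the shared portion of the prompt. A minimal sketch, with a function name of our own and counting prefill tokens as a proxy for prefill compute:

```python
def shared_prefix_prefill_tokens(num_sessions: int, prefix_tokens: int, cached: bool) -> int:
    """Prefill tokens spent on the shared prefix across all sessions."""
    if cached:
        return prefix_tokens  # computed once during warm-up, then reused
    return num_sessions * prefix_tokens


without_cache = shared_prefix_prefill_tokens(1000, 2048, cached=False)
with_cache = shared_prefix_prefill_tokens(1000, 2048, cached=True)
print(without_cache, with_cache, without_cache // with_cache)
```

The reduction applies only to the shared prefix. Each session's own user message still has to be prefilled, so the end-to-end TTFT gain is smaller than the reduction in shared-context compute.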

Surprising Takeaway

The V1 scheduler's unified {request_id: num_tokens} representation is the most underrated systems design decision in modern LLM serving.

In V0, prefill and decode were separate phases with separate scheduling logic. Adding a new feature (chunked prefill, speculative decoding, prefix caching) meant touching multiple code paths and managing interactions between them. In V1, the scheduler is a single token budget allocation problem. The entire distinction between "is this request prefilling or decoding?" disappears; the scheduler just asks "how many tokens should this request process this step?" The simplicity is not laziness. It is the result of recognizing that the hard constraint is token budget, not phase. Every production inference system designer should internalize this abstraction.

TL;DR For Engineers

vLLM's core insight is OS-style memory paging applied to KV cache: non-contiguous physical blocks, demand allocation, under 4% waste vs. 60–80% for naive systems

Continuous batching and PagedAttention compound: less memory waste means larger effective batch sizes, which means the batcher has more to schedule

V1 (default since v0.8.0) eliminates the prefill/decode phase split; the unified token budget scheduler makes chunked prefill, prefix caching, and speculative decoding composable

Enable prefix caching by default in V1: overhead at 0% cache hit rate is under 1%, and for shared-prompt workloads (RAG, chat) TTFT improvements can exceed 4x

CPU starvation is a real failure mode: provision at least 2 + N physical cores for N GPUs, or the engine core scheduler starves and throughput degrades non-obviously

The Memory System Everybody Thought Was an Inference Engine

vLLM solved a memory problem so cleanly that the field moved on before fully understanding what had been solved. PagedAttention is not a trick to make transformers faster. It is the application of decades of OS memory management research to a new domain where nobody thought to look. The result is a system that turns GPU memory efficiency into throughput and turns throughput into cost. Every team serving LLMs at scale is either using vLLM, benchmarking against it, or explaining why they chose something else. That is the definition of infrastructure that matters. The V1 rewrite proves the team has not stopped thinking. The unified scheduler is a second-order insight built on top of the first: once you understand that memory is the constraint, you can redesign scheduling around it from scratch.

vLLM is a memory management system, not just an inference library. Its core innovation, PagedAttention, applies OS virtual memory paging to LLM KV cache management, reducing memory waste from 60–80% to under 4% and enabling 2–4x throughput gains over prior systems at equivalent latency. The V1 engine (January 2025) eliminates the prefill/decode phase distinction with a unified token budget scheduler, making chunked prefill, prefix caching, and speculative decoding composable. Production teams that understand this architecture — and tune --gpu-memory-utilization, --max-num-batched-tokens, and --enable-prefix-caching accordingly — can approach 12,500 tok/s on a single H100 for 8B models. Teams that treat it as a drop-in replacement for Hugging Face Transformers get a fraction of that.

References

PagedAttention Paper, arXiv 2309.06180 — Kwon et al., SOSP 2023. The foundational vLLM paper.

vLLM GitHub Repository — 70.2k stars, 2,176 contributors, Apache-2.0

vLLM Documentation — official docs, V1 user guide, optimization config

vLLM V1 Blog Post — Anatomy of a High-Throughput LLM Inference System, September 2025

vLLM V1 Release Announcement — January 2025

vLLM Benchmarks 2026 — third-party throughput, latency, and comparison benchmarks

Comparative Analysis: vLLM vs TGI, arXiv 2511.17593 — empirical evaluation across LLaMA-2 7B to 70B

vLLM Prefix Caching Design Doc — V1 implementation details

vLLM Optimization Guide — chunked prefill, parallelism, CUDA graphs

RunPod: Introduction to vLLM and PagedAttention — accessible benchmark walkthrough
